Artificial Intelligence has fundamentally transformed machine translation from a novelty into a sophisticated enterprise technology capable of processing millions of words per hour with remarkable accuracy. This comprehensive technical analysis examines the underlying architectures, algorithms, quality evaluation frameworks, and implementation strategies that power modern AI translation systems—from neural machine translation engines to large language models—providing decision-makers with the technical understanding required to evaluate, select, and deploy these systems effectively.

Executive Summary: The Technical Landscape of AI Translation

Technical Overview: Modern AI translation systems employ deep neural network architectures, primarily encoder-decoder models with attention mechanisms, to achieve translation quality approaching human parity for general content. The technology stack has evolved through three major data-driven paradigms: Statistical Machine Translation (SMT, 2000s-2016), Neural Machine Translation (NMT, 2016-2022), and the current Large Language Model (LLM) era (2022-present), each bringing substantial improvements in fluency, accuracy, and contextual understanding over its predecessor.

Defining AI Translation: At its core, AI translation refers to the automated conversion of text or speech from one natural language to another using artificial intelligence techniques. Unlike earlier rule-based approaches that relied on handcrafted grammatical rules and bilingual dictionaries, modern AI systems learn translation patterns directly from vast corpora of parallel text—sentences and documents that exist in multiple languages. This data-driven approach enables systems to capture linguistic nuances, idiomatic expressions, and domain-specific terminology that would be impossible to encode manually.

The technological evolution follows a clear trajectory of increasing sophistication:

- Rule-Based MT (1950s-1990s): Handcrafted linguistic rules, limited vocabulary, rigid syntax—functional but brittle

- Statistical MT (2000s-2016): Data-driven probabilistic models, phrase-based translation, improved fluency through example learning

- Neural MT (2016-2022): Deep learning architectures, encoder-decoder models, attention mechanisms—achieving near-human quality for major language pairs

- LLM-Based Translation (2022-present): Large language models with emergent translation capabilities, in-context learning, few-shot prompting—enabling zero-shot translation and superior context handling

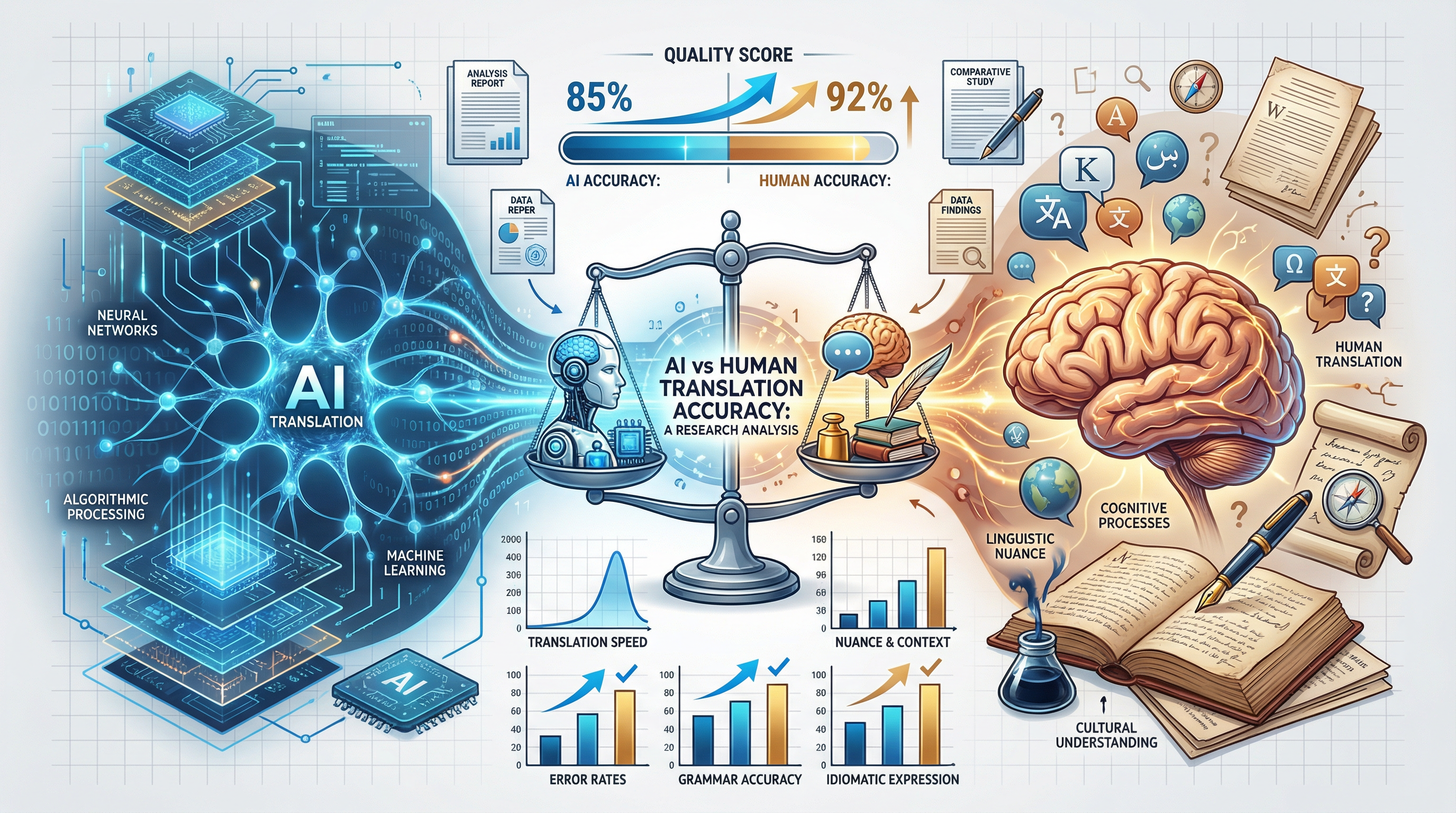

Current Capabilities: State-of-the-art systems demonstrate remarkable technical achievements. Google Translate processes over 100 billion words daily across 243 languages. DeepL achieves BLEU scores of 40-50 for European language pairs—comparable to professional human translation for general content. GPT-4 demonstrates emergent translation capabilities across 100+ languages without specific translation training, handling context windows up to 128,000 tokens for long document coherence.

Technical Limitations: Despite these advances, significant constraints remain. Low-resource languages (those with limited training data) continue to exhibit quality gaps of 30-50% compared to high-resource pairs. Domain-specific content—particularly legal, medical, and creative texts—requires specialized fine-tuning or human post-editing to achieve acceptable quality. Long-range dependencies beyond 1,000 tokens often result in coherence degradation. Cultural nuances, humor, and emotionally charged content remain challenging for even the most advanced systems.

When to Use AI vs. Human Translation: Technical decision frameworks increasingly rely on quality-cost-time tradeoffs. AI translation excels for high-volume, time-sensitive, general-domain content where 95%+ accuracy is sufficient. Human translation remains essential for certified documents, creative works, legal contracts, medical records, and any content where errors carry significant liability. The emerging best practice employs hybrid workflows (AI for first-pass translation with targeted human review), optimizing both efficiency and quality.

The Technology Stack: How AI Translation Works

Neural Machine Translation (NMT) Architecture

Neural Machine Translation represents the dominant technical architecture for production translation systems. NMT models employ deep neural networks—specifically encoder-decoder architectures with attention mechanisms—to learn end-to-end mappings between languages. Unlike statistical approaches that break translation into discrete steps (alignment, translation, reordering), NMT processes entire sentences holistically, capturing complex dependencies and producing more fluent output.

Encoder-Decoder Models: The Foundational Architecture

The encoder-decoder architecture, introduced by Sutskever et al. (2014) and refined by Cho et al. (2014), forms the backbone of modern NMT systems. The architecture consists of two distinct neural network components working in sequence:

The Encoder: This component processes the source language input, typically implemented as a recurrent neural network (LSTM or GRU) in early architectures or as transformer blocks in modern implementations. In the original recurrent design, the encoder reads the input sequence word by word (or subword by subword, using BPE tokenization) and compresses it into a fixed-length vector representation called the context vector or thought vector. This vector captures the semantic meaning of the entire source sentence in a dense numerical format that the decoder can process.

Early encoder implementations struggled with long sentences—the fixed-size context vector acted as an information bottleneck, losing important details from lengthy inputs. Modern transformer-based encoders overcome this limitation through self-attention mechanisms that maintain direct connections between all positions in the input sequence, preserving information regardless of distance.

The Decoder: The decoder generates the target language output, one token at a time, conditioned on both the context vector from the encoder and previously generated tokens. At each step, the decoder computes a probability distribution over the target vocabulary and selects the next token (typically using beam search or greedy decoding). The decoder is autoregressive—each generated token becomes input for the next generation step, creating a chain of dependencies that ensures grammatical coherence.
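The autoregressive loop described above can be sketched in a few lines. This is a minimal illustration of greedy decoding only (beam search is not shown), and `toy_model` and `TOY_LEXICON` are hypothetical stand-ins for a trained decoder: a real system would compute the probability distribution with a neural network rather than a lookup table.

```python
from typing import Callable, Dict, List


def greedy_decode(
    next_token_probs: Callable[[List[str], List[str]], Dict[str, float]],
    source: List[str],
    max_len: int = 20,
    eos: str = "</s>",
) -> List[str]:
    """Generate target tokens one at a time, always taking the most probable."""
    output: List[str] = []
    for _ in range(max_len):
        # The distribution is conditioned on the source and on everything
        # generated so far: this is what makes the decoder autoregressive.
        probs = next_token_probs(source, output)
        token = max(probs, key=probs.get)
        if token == eos:
            break
        output.append(token)
    return output


# Hypothetical toy "model": translates word by word from a tiny lexicon.
TOY_LEXICON = {"hello": "bonjour", "world": "monde"}


def toy_model(source: List[str], prefix: List[str]) -> Dict[str, float]:
    if len(prefix) < len(source):
        word = TOY_LEXICON.get(source[len(prefix)], "<unk>")
        return {word: 0.9, "</s>": 0.1}
    return {"</s>": 1.0}


print(greedy_decode(toy_model, ["hello", "world"]))  # ['bonjour', 'monde']
```

Greedy decoding commits to the single best token at every step. Beam search instead keeps several partial hypotheses alive, which usually costs more computation but can recover from a locally attractive yet globally poor choice.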

Encoder-Decoder Technical Specifications

- Typical embedding dimension: 512-1024 dimensions per token

- Hidden layer size: 512-2048 units (varies by model scale)

- Number of layers: 6-24 transformer blocks (encoder + decoder)

- Attention heads: 8-16 parallel attention mechanisms per layer

- Vocabulary size: 32,000-64,000 subword tokens (BPE/SentencePiece)

- Context window: 512-2,048 tokens (higher for modern architectures)

- Parameters: 60M-600M for production models; 1B+ for large-scale systems

Attention Mechanisms: Solving the Bottleneck Problem

The attention mechanism, introduced by Bahdanau et al. (2015) and revolutionized by the Transformer architecture (Vaswani et al., 2017), addresses the critical limitation of fixed-length context vectors. Rather than compressing the entire source sentence into a single vector, attention allows the decoder to selectively focus on different parts of the input when generating each output token.

How Attention Works: At each decoding step, the attention mechanism computes alignment scores between the current decoder state and all encoder states. These scores determine how much each source token contributes to generating the current target token. The mechanism produces a weighted sum of encoder outputs—the context vector for that specific decoding step—allowing the model to dynamically attend to relevant source words.

Mathematically, attention computes three vectors for each token: Query (Q), Key (K), and Value (V). The query represents what the decoder is looking for; keys represent what each encoder token offers; values contain the actual information to be aggregated. The attention score is calculated as the dot product of query and key, scaled and normalized through softmax to produce attention weights. The output is the weighted sum of values.
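The computation above corresponds to scaled dot-product attention, usually written as softmax(QK^T / sqrt(d_k)) V. The following is a minimal pure-Python sketch for a single query; the function names and the toy vectors are illustrative assumptions, and production systems perform the same arithmetic as batched matrix operations on accelerators.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Normalize scores into weights that sum to 1 (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query: Vector, keys: List[Vector], values: List[Vector]) -> Tuple[Vector, Vector]:
    """Return (attention weights, weighted sum of values) for one query."""
    d_k = len(query)
    # Dot product of the query with each key, scaled by sqrt(d_k).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k) for key in keys]
    weights = softmax(scores)
    # Weighted sum of the value vectors, one output dimension at a time.
    dim = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context


# Toy example: the query matches the first key more closely than the second.
weights, context = attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, context)
```

Because the query aligns with the first key, the first value receives the larger weight and dominates the resulting context vector.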

Self-Attention: The Transformer architecture introduced self-attention, where each position in a sequence attends to all other positions in the same sequence. This enables the model to capture dependencies regardless of distance—crucial for handling long-range linguistic phenomena like anaphora resolution, subject-verb agreement across clauses, and discourse coherence. Self-attention operates in parallel across all positions, unlike recurrent networks that process sequentially, enabling significant speedups on modern hardware.

Multi-Head Attention: Parallel Perspective Processing

Multi-head attention extends the basic attention mechanism by running multiple attention operations in parallel—each with different learned linear projections of queries, keys, and values. A typical configuration uses 8 or 16 attention heads, each potentially learning to focus on different types of relationships: syntactic dependencies, semantic similarities, coreference links, or positional patterns.

The outputs from all heads are concatenated and linearly projected to produce the final attention output. This multi-perspective approach significantly enhances model capacity, allowing the system to capture diverse linguistic phenomena simultaneously. Research has shown that different heads specialize—some focus on positional relationships, others on syntactic dependencies, and others on semantic similarity—creating an ensemble of specialized attention mechanisms within a single model.

The Transformer Architecture: Attention Is All You Need

The Transformer architecture, introduced by Google researchers in the seminal 2017 paper "Attention Is All You Need," revolutionized NMT by eliminating recurrence entirely, replacing it with purely attention-based processing. The architecture consists of stacked encoder and decoder layers. Each encoder layer contains two sub-layers: multi-head self-attention and a position-wise feed-forward network. Each decoder layer adds a third sub-layer, multi-head attention over the encoder output, and masks its self-attention so that a position cannot attend to later positions.

Key Innovations:

- Positional Encoding: Since transformers process all positions in parallel, they lack inherent sequential information. Positional encodings inject position information using sinusoidal functions or learned embeddings, allowing the model to distinguish word order.

- Layer Normalization: Applied before each sub-layer (pre-norm) or after (post-norm), stabilizing training and enabling deeper networks.

- Residual Connections: Skip connections around each sub-layer, facilitating gradient flow through deep stacks (6-24 layers).

- Parallel Processing: Unlike RNNs that process token-by-token, transformers process entire sequences simultaneously, enabling efficient GPU utilization.
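As an illustration of the positional encoding item above, here is a minimal sketch of the sinusoidal scheme from the original Transformer paper, in which even dimensions use a sine and odd dimensions a cosine of the position scaled by a dimension-dependent wavelength. The function name and the small table size are illustrative choices; learned positional embeddings are the alternative mentioned above and are not shown.

```python
import math
from typing import List


def positional_encoding(num_positions: int, d_model: int) -> List[List[float]]:
    """Build a table where row `pos` is the encoding added to the token at `pos`."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the same wavelength 10000^(2k/d_model).
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


pe = positional_encoding(num_positions=4, d_model=4)
print(pe[0])  # position 0: [0.0, 1.0, 0.0, 1.0]
```

Each position receives a distinct vector, and nearby positions receive similar ones, which gives the otherwise order-blind attention layers a signal for word order.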

The Transformer architecture has become the de facto standard for NMT systems, forming the foundation for toolkits such as Facebook's fairseq and Hugging Face transformers and for virtually all modern production systems; the original LSTM-based Google Neural Machine Translation (GNMT) system of 2016 predates it. Its scalability, both in terms of model size and training data, has enabled the progression to billion-parameter models and beyond.

Subword Tokenization: Handling Vocabulary Complexity

Modern NMT systems don't operate on words directly—instead, they use subword tokenization to balance vocabulary size and coverage. Algorithms like Byte Pair Encoding (BPE) and SentencePiece learn to split rare words into frequent subword units, creating vocabularies of 32,000-64,000 tokens that can represent any text through composition.

For example, the word "unhappiness" might be tokenized as ["un", "happiness"] or ["un", "happi", "ness"], depending on the learned BPE merge rules. This approach handles morphologically rich languages (German, Finnish, Turkish), compound words, and rare terminology without requiring massive vocabularies. The subword vocabulary is learned from the training corpus before model training begins and is then held fixed, so the same splits are applied during training and inference.
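To show how merge rules arise, the sketch below learns BPE merges from a toy word-frequency table and applies them to a new word. It is a simplified illustration under stated assumptions: the corpus is invented, there is no end-of-word marker, and ties are broken by first occurrence, so it will not reproduce the exact vocabularies of production tools such as SentencePiece.

```python
from collections import Counter
from typing import Dict, List, Tuple

Merge = Tuple[str, str]


def merge_pair(symbols: List[str], pair: Merge) -> List[str]:
    """Replace every adjacent occurrence of `pair` with the joined symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return merged


def learn_bpe(word_freqs: Dict[str, int], num_merges: int) -> List[Merge]:
    """Repeatedly merge the most frequent adjacent symbol pair in the corpus."""
    vocab = {tuple(word): freq for word, freq in word_freqs.items()}
    merges: List[Merge] = []
    for _ in range(num_merges):
        pairs: Counter = Counter()
        for symbols, freq in vocab.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        new_vocab: Dict[tuple, int] = {}
        for symbols, freq in vocab.items():
            key = tuple(merge_pair(list(symbols), best))
            new_vocab[key] = new_vocab.get(key, 0) + freq
        vocab = new_vocab
    return merges


def apply_bpe(word: str, merges: List[Merge]) -> List[str]:
    """Tokenize a word by replaying the learned merges in order."""
    symbols = list(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return symbols


toy_corpus = {"low": 5, "lower": 2, "lowest": 3}  # invented frequencies
merges = learn_bpe(toy_corpus, num_merges=2)
print(merges)                      # [('l', 'o'), ('lo', 'w')]
print(apply_bpe("lowly", merges))  # ['low', 'l', 'y']
```

The unseen word "lowly" is still representable: the frequent unit "low" is kept whole and the remainder falls back to single characters, which is how subword vocabularies avoid out-of-vocabulary tokens.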

Large Language Models (LLMs) for Translation

Large Language Models—GPT-4, Claude, Gemini, and their counterparts—represent a paradigm shift in machine translation. These models demonstrate emergent translation capabilities as a byproduct of general language understanding training, without explicit translation objectives. Their scale (hundreds of billions to trillions of parameters), massive training corpora (trillions of tokens), and general-purpose architecture enable unprecedented translation flexibility and quality.

GPT-4, Claude, and Gemini Translation Capabilities

GPT-4 (OpenAI) demonstrates remarkable translation capabilities across 100+ languages, achieving BLEU scores competitive with specialized NMT systems on major language pairs. Its 128,000-token context window enables coherent translation of entire documents, maintaining consistency across sections. GPT-4 excels at handling context-dependent translations—disambiguating pronouns, maintaining terminology consistency, and preserving document-level coherence that challenges sentence-level NMT systems.

Claude (Anthropic) offers similar capabilities with emphasis on safety and accuracy. Claude's 200,000-token context window (as of 2024) enables translation of book-length documents while maintaining narrative coherence. The model demonstrates strong performance on low-resource languages and specialized domains, likely due to its training on diverse web content including technical documentation, academic papers, and multilingual publications.

Gemini (Google DeepMind) integrates Google's extensive multilingual resources, leveraging training data from Google Translate, web crawl data, and proprietary multilingual datasets. Gemini 1.5's 1-2 million token context window represents a qualitative leap, enabling translation of lengthy legal contracts, academic papers, or book chapters in a single pass with maintained coherence.

GPT-4

- Context: 128,000 tokens

- Languages: 100+ supported

- Strengths: Context handling, fluency

- Pricing: $10-30 per 1M tokens

Claude

- Context: 200,000 tokens

- Languages: 100+ supported

- Strengths: Accuracy, safety

- Pricing: $3-15 per 1M tokens

Gemini 1.5

- Context: 1-2M tokens

- Languages: 100+ supported

- Strengths: Long documents, recall

- Pricing: $3.50-7 per 1M tokens

Few-Shot Prompting Techniques

Few-shot prompting enables LLMs to adapt to specific translation requirements without model fine-tuning. By providing example translations in the prompt, users can guide the model toward desired style, terminology, and domain conventions. This technique proves particularly valuable for specialized domains where generic translation falls short.

Effective few-shot prompts typically include:

- Task Definition: Clear instruction specifying the translation direction and any constraints

- Example Pairs: 2-5 source-target examples demonstrating desired style and terminology

- Context: Domain information, audience description, or style guidelines

- Input to Translate: The actual source text for translation

Research indicates that 3-5 high-quality examples often suffice for domain adaptation, with diminishing returns beyond 10 examples. The quality of examples matters more than quantity—well-chosen examples covering key terminology and stylistic patterns outperform numerous mediocre examples.
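The four prompt components listed above can be assembled programmatically. The sketch below builds the prompt text only and does not call any model API; the template wording, the function name, and the example sentences are illustrative assumptions rather than a recommended or vendor-specific format.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    source_lang: str,
    target_lang: str,
    examples: List[Tuple[str, str]],
    text: str,
    context: str = "",
) -> str:
    """Assemble task definition, context, example pairs, and the input text."""
    lines = [f"Translate the following text from {source_lang} to {target_lang}."]
    if context:
        lines.append(f"Context: {context}")
    lines.append("")
    for source_example, target_example in examples:
        lines.append(f"{source_lang}: {source_example}")
        lines.append(f"{target_lang}: {target_example}")
        lines.append("")
    # The final pair is left open so the model completes the target side.
    lines.append(f"{source_lang}: {text}")
    lines.append(f"{target_lang}:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    source_lang="English",
    target_lang="German",
    examples=[
        ("Please restart the device.", "Bitte starten Sie das Gerät neu."),
        ("The battery is low.", "Der Akkustand ist niedrig."),
    ],
    text="Please charge the device.",
    context="Consumer electronics manual, formal register.",
)
print(prompt)
```

Keeping the examples in the same format as the final open-ended pair lets the model infer the expected output shape from the pattern itself.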

Chain-of-Thought Translation

Chain-of-thought prompting (eliciting step-by-step reasoning before generating output) can improve translation quality for complex, ambiguous, or culturally nuanced content. By prompting the model to "think through" translation decisions (e.g., "First, identify the domain. Next, disambiguate ambiguous terms. Then, consider cultural context."), chain-of-thought techniques make intermediate decisions explicit and can reduce errors.

This approach proves especially valuable for:

- Idiomatic expressions requiring conceptual rather than literal translation

- Technical documents with specialized terminology

- Content with cultural references needing localization

- Texts with ambiguous pronouns or coreference chains

- Legal or regulatory content where precision is paramount

In-Context Learning Mechanisms

LLMs demonstrate in-context learning—the ability to adapt to new tasks or patterns based solely on information provided in the prompt, without parameter updates. For translation, this enables dynamic adaptation to:

- Terminology Consistency: Providing a glossary in the prompt ensures consistent translation of key terms

- Style Adaptation: Examples of formal vs. informal style guide the model's register selection

- Domain Specialization: In-context medical or legal terminology improves domain accuracy

- Brand Voice: Previous translations establish consistent brand personality

The mechanism underlying in-context learning remains an active research area, but practically, it enables powerful customization without expensive fine-tuning, making LLMs adaptable to diverse translation workflows.

Training Data Requirements

The quality and quantity of training data fundamentally determine NMT system performance. Unlike traditional software where code logic determines behavior, neural translation systems learn entirely from examples: the training corpus effectively becomes the "program" that determines translation quality, style, and capabilities.

Parallel Corpora: The Essential Resource

Parallel corpora—collections of texts with their human translations—form the primary training data for NMT systems. These aligned sentence pairs provide the direct supervision signal that teaches models to map source to target language. High-quality parallel data sources include:

- Official Sources: United Nations proceedings (UN Parallel Corpus), European Parliament proceedings (Europarl), EU press releases

- Religious Texts: Bible translations (1000+ languages), Quran translations, Book of Mormon

- Media: Subtitle databases (OpenSubtitles), news wire services, multilingual news sites

- Technical: Software localization files (i18n), technical documentation, academic papers

- Web: Wikipedia (through Wikidata alignment), web crawl data with language identification

Data requirements scale with language complexity and desired quality. Major language pairs (English-Spanish, English-French) benefit from billions of parallel sentences, enabling high-quality general-domain translation. Low-resource pairs may have only thousands or millions of sentences, resulting in quality gaps that transfer learning and multilingual training attempt to address.

Data Quality vs. Quantity Tradeoffs

While more data generally improves performance, quality matters significantly. Training on noisy parallel corpora—containing misaligned sentences, poor translations, or source-target mismatches—degrades model performance. Data cleaning pipelines typically include:

- Length Filtering: Removing sentence pairs with extreme length ratios (indicating misalignment)

- Language Identification: Verifying that source/target match expected languages (filtering code-switching or noise)

- Deduplication: Removing duplicate sentence pairs that could cause overfitting

- Quality Scoring: Using pre-trained models to estimate translation quality and filter low-confidence pairs

- Domain Filtering: Selecting data relevant to target translation domain
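Two of the steps above, length filtering and deduplication, need no external models and can be sketched directly. The thresholds below (`max_ratio`, `max_tokens`) are illustrative assumptions, not recommended values, and whitespace splitting is a crude proxy for token counts; language identification, quality scoring, and domain filtering would require additional models and are not shown.

```python
from typing import Iterable, Iterator, Tuple

Pair = Tuple[str, str]


def clean_parallel(
    pairs: Iterable[Pair],
    max_ratio: float = 2.5,
    max_tokens: int = 200,
) -> Iterator[Pair]:
    """Yield sentence pairs that pass length-ratio and duplicate checks."""
    seen = set()
    for source, target in pairs:
        source, target = source.strip(), target.strip()
        n_source, n_target = len(source.split()), len(target.split())
        # Drop empty or overly long sides.
        if n_source == 0 or n_target == 0:
            continue
        if n_source > max_tokens or n_target > max_tokens:
            continue
        # An extreme length ratio usually signals a misaligned pair.
        if max(n_source, n_target) / min(n_source, n_target) > max_ratio:
            continue
        # Case-insensitive exact-match deduplication.
        key = (source.lower(), target.lower())
        if key in seen:
            continue
        seen.add(key)
        yield source, target


raw = [
    ("Good morning .", "Bonjour ."),
    ("good morning .", "bonjour ."),                    # duplicate (case only)
    ("Yes", "Oui , bien sûr , je viendrai demain ."),   # length mismatch
    ("", "Rien"),                                       # empty source
    ("Thank you very much .", "Merci beaucoup ."),
]
cleaned = list(clean_parallel(raw))
print(cleaned)
```

Only the first and last pairs survive. For low-resource pairs, thresholds like these would need to be loosened, since aggressive filtering can remove a large share of scarce data.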

Research suggests that for high-resource languages, carefully curated data of 100M sentences may outperform 1B sentences of unfiltered crawl data. For low-resource languages, every quality sentence counts, and aggressive filtering may leave insufficient data for training.

Synthetic Data Generation

When parallel corpora are insufficient, synthetic data generation techniques expand training resources:

- Back-Translation: Translating monolingual target text into the source language using a reverse (target-to-source) MT system, then training on the resulting synthetic pairs, in which the target side is genuine human text. This technique has proven remarkably effective, improving BLEU scores by 2-4 points even for high-resource languages.

- Forward-Translation: Translating monolingual source text to target, creating parallel data from single-language resources.

- Noise Injection: Adding controlled noise (word deletion, substitution, reordering) to clean parallel data to improve robustness.

- LLM-Generated Examples: Using large language models to generate synthetic parallel examples for specific domains or terminology needs.
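The data flow of back-translation, the first technique above, is easy to get backwards, so a small sketch may help. Here `toy_reverse_model` and `TOY_FR_EN` are hypothetical stand-ins for a trained target-to-source MT system; the point is only which side of each pair is synthetic.

```python
from typing import Callable, Iterable, List, Tuple


def back_translate(
    target_sentences: Iterable[str],
    reverse_translate: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Pair each genuine target sentence with a machine-generated source."""
    # Output pairs are (synthetic source, genuine target): the model being
    # trained always sees human-written text on the side it learns to produce.
    return [(reverse_translate(target), target) for target in target_sentences]


# Hypothetical toy French-to-English "model" based on a word lookup.
TOY_FR_EN = {"bonjour": "hello", "monde": "world"}


def toy_reverse_model(sentence: str) -> str:
    return " ".join(TOY_FR_EN.get(word, word) for word in sentence.split())


synthetic_pairs = back_translate(["bonjour monde"], toy_reverse_model)
print(synthetic_pairs)  # [('hello world', 'bonjour monde')]
```

Forward-translation reverses the roles: the source side is genuine and the target side is machine-generated, so errors in the synthetic text end up on the side the model learns to produce.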

Domain-Specific Fine-Tuning

Generic NMT models trained on mixed-domain data often underperform on specialized content. Domain adaptation techniques improve performance on specific text types:

- Continued Training: Fine-tuning on in-domain parallel data (medical, legal, technical) to adapt vocabulary and style

- Mixed Fine-Tuning: Combining general and domain data to maintain broad capabilities while improving domain accuracy

- Adapter Layers: Small trainable modules (LoRA, adapters) that adapt a frozen base model to new domains with minimal parameters

- Terminology Constraints: Forcing specific term translations through constrained decoding or vocabulary constraints

Domain adaptation can improve BLEU scores by 5-15 points on specialized content, transforming marginally useful generic output into production-ready domain-specific translation. The key constraint is availability of quality in-domain parallel data, which often requires significant investment for niche domains.
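To make the "minimal parameters" of the adapter approach concrete, the sketch below compares trainable-parameter counts for one weight matrix under full fine-tuning and under a LoRA-style low-rank update, where the update to a d_out x d_in matrix is factored into two matrices of rank r. The matrix size and rank are illustrative assumptions, not values taken from any particular system.

```python
from typing import Tuple


def lora_param_counts(d_out: int, d_in: int, rank: int) -> Tuple[int, int]:
    """Return (full fine-tuning, low-rank adapter) trainable-parameter counts."""
    full = d_out * d_in               # every entry of the weight matrix is updated
    adapter = rank * (d_in + d_out)   # factors of shape (rank x d_in) and (d_out x rank)
    return full, adapter


full, adapter = lora_param_counts(d_out=1024, d_in=1024, rank=8)
print(full, adapter)  # 1048576 16384
```

For this illustrative 1024 x 1024 matrix at rank 8, the adapter trains 16,384 parameters instead of 1,048,576, roughly 1.6% of the full count, while the base weights stay frozen.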

State-of-the-Art Systems Comparison

The AI translation landscape features multiple mature, production-ready systems, each with distinct architectural approaches, strengths, and optimal use cases. Understanding these differences enables informed technology selection for specific requirements.

Google Translate: The Scale Leader

Google Translate represents the most widely deployed translation system globally, processing over 100 billion words daily across 243 supported languages. Its underlying Google Neural Machine Translation (GNMT) architecture, deployed in 2016, pioneered the transition to production-scale NMT.

Technical Architecture: GNMT employs an enhanced encoder-decoder with attention, using a deep LSTM network with 8 encoder and 8 decoder layers. The system uses a shared vocabulary of 32,000 wordpiece tokens across source and target languages, enabling mixed-language processing and zero-shot translation. Google's multilingual NMT approach trains a single model on multiple language pairs simultaneously, allowing transfer learning where high-resource language pairs improve low-resource translation quality.

Zero-Shot Translation: A notable GNMT innovation is direct translation between language pairs never explicitly seen during training (e.g., Japanese to Korean when trained only on Japanese-English and English-Korean). The model learns an intermediate "interlingua" representation that enables such zero-shot capabilities, dramatically expanding language coverage without requiring parallel corpora for all language pairs.

Quality Benchmarks: Google Translate achieves BLEU scores of 35-45 for major language pairs, with particularly strong performance on high-resource European and Asian languages. Real-time user feedback and continuous model updates maintain competitive quality. The system demonstrates robust performance on general-domain content (news, web pages, casual communication) with known limitations on literary, legal, and highly technical content.

Google Translate Technical Specifications

- Architecture: GNMT with 8 encoder + 8 decoder LSTM layers

- Vocabulary: 32,000 shared wordpiece tokens

- Languages: 243 supported (written languages)

- Daily Volume: 100+ billion words translated

- API: RESTful with 1M characters/month free tier

- Pricing: $20 per 1M characters (standard), $40 (premium)

- Special Features: Document translation, website localization, real-time camera

DeepL: The Quality Leader

DeepL, developed by German company DeepL GmbH, has established a reputation for superior translation quality, particularly for European languages. The company began as Linguee, a bilingual dictionary search engine; its DeepL translation service launched in 2017 and quickly gained recognition for producing more natural, fluent translations than competitors.

Neural Network Approach: DeepL's architecture details remain proprietary, but the company describes using "convolutional neural networks" (CNNs) rather than the more common recurrent or transformer architectures. This CNN approach may contribute to DeepL's noted strength in capturing local context and producing coherent phrase-level translations. The network is trained on the extensive Linguee corpus of high-quality human translations accumulated over years of dictionary service operation.

European Language Excellence: DeepL's strongest performance appears on European language pairs, likely reflecting both the company's German origins and the high-quality European Union document corpus in its training data. Blind tests consistently rate DeepL translations as more natural and accurate than competitors for German, French, Spanish, Italian, Dutch, and Polish. Performance on Asian and low-resource languages, while improving, historically lagged behind Google.

API and Enterprise Capabilities: DeepL offers a comprehensive REST API with support for text, document, and XML translation. Enterprise features include custom glossaries (up to 1,000 entries) for terminology enforcement, formality controls (formal/informal register selection), and detailed API analytics. The CAT tool integration ecosystem is extensive, with plugins for Trados, memoQ, Wordfast, and others.

Quality Benchmarks: Independent evaluations consistently place DeepL at or near the top for European language quality. On the WMT (Workshop on Machine Translation) benchmarks, DeepL achieves competitive or leading BLEU scores for Germanic and Romance language pairs. Professional translators report lower post-editing effort for DeepL output compared to Google Translate, indicating higher baseline quality.

Microsoft Translator: The Enterprise Choice

Microsoft Translator, part of Azure Cognitive Services, positions itself as the enterprise-focused translation solution with strong integration into Microsoft's productivity ecosystem and comprehensive customization capabilities.

Azure Cognitive Services Integration: Microsoft Translator integrates natively with the Azure cloud ecosystem, offering seamless deployment for enterprises already using Azure infrastructure. The service supports 100+ languages with neural machine translation as the default for all supported languages. Integration points include Office 365 (Word, Excel, PowerPoint), SharePoint, Teams, and Power Platform.

Custom Model Training: Microsoft's standout feature is Custom Translator, allowing enterprises to train domain-specific NMT models using their own parallel data. Organizations can upload 10,000+ parallel sentences and receive a custom model trained specifically on their terminology, style, and domain. This capability proves invaluable for specialized industries (healthcare, legal, manufacturing) where generic translation falls short.

Enterprise Features: Microsoft Translator offers features critical for business deployment: dictionary/phrase mapping for guaranteed terminology translation, profanity filtering, sentence breaking control, alignment data (showing source-target correspondence), and batch document processing. The Translator API supports text, document (Office and PDF formats), and speech translation with unified billing through Azure.

Meta NLLB: The Open Source Champion

Meta's No Language Left Behind (NLLB) project represents a significant contribution to open-source machine translation and low-resource language support. Released in 2022, NLLB aims to provide quality translation for 200 languages, with particular emphasis on African, South Asian, and Indigenous languages historically underserved by commercial translation technology.

200-Language Coverage: NLLB supports 200 languages—approximately twice the coverage of Google Translate at its launch. This includes many low-resource languages like Fulani (West Africa), Kikongo (Central Africa), and Tamazight (North Africa) that lack significant digital resources. The project combines extensive data collection with novel training techniques to achieve usable quality on these challenging pairs.

Open Source Approach: Unlike proprietary systems, NLLB models, training data, and evaluation benchmarks are publicly available under open licenses. This enables researchers, NGOs, and governments to build upon, customize, and deploy translation technology without vendor lock-in or ongoing API costs. The FLORES-200 evaluation benchmark, released alongside NLLB, provides standardized quality measurement across all 200 supported languages.

Technical Architecture: NLLB employs large-scale transformer architectures with sparse expert models (mixture of experts), enabling efficient multilingual training across diverse language families. The model uses specialized training techniques including self-supervised learning on monolingual data, back-translation, and multilingual training with language-aware routing.

NLLB Model Variants

- NLLB-200 (distilled 600M): 600M parameters, mobile-friendly

- NLLB-200 (1.3B): 1.3B parameters, balanced quality/speed

- NLLB-200 (3.3B): 3.3B parameters, highest quality of the variants listed here

- Architecture: Transformer; the sparse mixture-of-experts design applies to the largest NLLB-200 model (54.5B parameters), while the variants listed above are dense

- Training Data: Parallel + monolingual for all 200 languages

- License: CC-BY-NC (models), CC-BY-SA (data, benchmarks)

OpenAI GPT-4: The Context Champion

While not purpose-built for translation, GPT-4 demonstrates remarkable emergent translation capabilities as a byproduct of its general language understanding training. For certain translation scenarios—particularly those requiring deep context understanding, style adaptation, or creative translation—GPT-4 can outperform dedicated NMT systems.

General-Purpose LLM Translation: GPT-4 was not explicitly trained on translation objectives; its translation capabilities emerged from training on diverse multilingual internet text. Despite this indirect training, GPT-4 achieves BLEU scores competitive with NMT systems on major language pairs while offering unique advantages: superior handling of ambiguous context, ability to explain translation choices, and flexibility in adapting to arbitrary style or domain requirements through prompting.

Context Handling Superiority: GPT-4's primary advantage is its extended context window (128,000 tokens) enabling document-level translation with maintained coherence. Where sentence-level NMT systems lose track of terminology, pronoun references, and narrative flow across segments, GPT-4 processes entire documents, producing consistent, coherent translations that preserve authorial voice and maintain terminological consistency throughout lengthy texts.

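Cost Considerations: GPT-4 is priced per token (approximately $10-30 per million tokens, with both the prompt and the generated translation billed), whereas dedicated translation APIs are priced per source character ($20 per million characters for Google Translate's standard tier), so the two figures are not directly comparable. The effective cost of GPT-4 depends on how many tokens the languages involved require per character, which is higher for many non-Latin scripts, and on prompt overhead such as instructions, glossaries, and few-shot examples. For high-volume translation needs, the resulting cost differential can be substantial and should be measured on representative content. GPT-4 is best suited for lower-volume, high-value content where context sensitivity justifies any premium.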

Amazon Translate: The AWS Native

Amazon Translate provides neural machine translation as part of the AWS ecosystem, with tight integration into AWS services and competitive pricing for enterprise workloads.

AWS Integration: Amazon Translate integrates with S3 (batch document translation), Lambda (serverless translation workflows), Comprehend (sentiment-aware translation), and other AWS services. This integration enables sophisticated translation pipelines: automatically translating uploaded documents, triggering review workflows, or processing real-time streams.

Real-Time Capabilities: Amazon Translate emphasizes low-latency real-time translation, suitable for chat applications, customer service, and live content. The service supports both synchronous API calls for immediate needs and asynchronous batch processing for large-scale jobs.

Custom Terminology: Custom terminology dictionaries allow enterprises to enforce specific term translations, critical for maintaining brand consistency or technical accuracy. Custom terminology works across 75 supported languages with real-time application during translation.

| System | Languages | Strengths | Pricing |
|---|---|---|---|
| Google Translate | 243 | Scale, language coverage, zero-shot | $20/M chars |
| DeepL | 32 | European quality, fluency | $6.99-20/M chars |
| Microsoft Translator | 100+ | Enterprise, custom models | $10/M chars |
| Meta NLLB | 200 | Open source, low-resource | Free (self-hosted) |
| GPT-4 | 100+ | Context, creativity, adaptability | $10-30/M tokens |
| Amazon Translate | 75 | AWS integration, real-time | $15/M chars |

Quality Metrics and Evaluation

Evaluating translation quality requires both automatic metrics—enabling rapid, scalable assessment—and human evaluation frameworks providing authoritative quality judgments. Understanding these metrics enables meaningful comparison of systems and informed quality threshold setting for deployment decisions.

Automatic Metrics

BLEU: The Standard Benchmark

BLEU (Bilingual Evaluation Understudy), introduced by Papineni et al. (2002), remains the most widely cited automatic translation metric. BLEU measures n-gram precision between machine output and one or more human reference translations, comparing how many word sequences of length 1-4 match between candidate and reference.

The calculation process: (1) Count matching n-grams of each length (1-4) between candidate and reference, clipping each n-gram's count at the number of times it appears in the reference (the "modified" precision), (2) Calculate the weighted geometric mean of the four modified precision scores, (3) Multiply by a brevity penalty if the candidate is shorter than the reference (to discourage overly short outputs), (4) Express the result on a 0-100 scale, where 100 indicates a perfect match with the reference.
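
The steps above can be sketched in a few lines of Python. This is a minimal, unsmoothed, single-reference illustration on whitespace tokens, not a replacement for a standard implementation; the function names `ngrams` and `sentence_bleu` are chosen here for illustration, and uniform weights of 1/4 per n-gram order are assumed.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, reference, max_n=4):
    """Single-reference BLEU on whitespace tokens, scaled to 0-100."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        # Clip each candidate n-gram count by its count in the reference.
        matches = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if matches == 0 or total == 0:
            return 0.0  # unsmoothed: any zero precision gives BLEU 0
        log_precisions.append(math.log(matches / total))
    # Brevity penalty applies only when the candidate is shorter.
    bp = 1.0 if len(cand) >= len(ref) else math.exp(1 - len(ref) / len(cand))
    # Uniform weights: geometric mean of the modified precisions.
    return 100.0 * bp * math.exp(sum(log_precisions) / max_n)
```

Because the sketch is unsmoothed, any sentence with no matching 4-gram scores zero, which is one reason BLEU is normally reported at corpus level rather than per sentence.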

Interpretation: BLEU scores require context for meaningful interpretation. A score of 30-40 indicates acceptable quality for many applications; 40-50 approaches professional human quality for general content; above 50 suggests near-human parity. However, BLEU correlates imperfectly with human judgment and has known limitations: it rewards literal matches over valid paraphrases, ignores semantic equivalence expressed differently, and doesn't account for grammaticality or fluency directly.

Typical Scores by Domain:

- News content: 40-50 BLEU (high quality, consistent style)

- Technical documentation: 35-45 BLEU (terminology-dependent)

- Conversational/social media: 30-40 BLEU (informal, variable)

- Literary/creative: 20-30 BLEU (low, due to creative variation)

- Legal contracts: 25-35 BLEU (precision requirements, conservative scoring)

METEOR, chrF, and TER: Complementary Metrics

METEOR (Metric for Evaluation of Translation with Explicit ORdering): Addresses BLEU's limitations by incorporating synonymy, stemming, and paraphrase matching. METEOR aligns candidate and reference using flexible matching (exact, stem, synonym, paraphrase) and calculates F-mean (harmonic mean of precision and recall). METEOR correlates more strongly with human judgment than BLEU, particularly for content where valid variation exists.

chrF (Character n-gram F-score): Computes an F-score over character n-grams rather than word n-grams, so partially matching word forms still earn credit. This makes it particularly effective for morphologically rich languages, where a single stem appears in many inflected forms. chrF correlates well with human judgment and has become a standard metric for low-resource language evaluation where word-level tokenization is challenging.
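
To make the character-level idea concrete, here is a simplified sketch of a chrF-style score. It assumes character n-gram orders 1-6 and a recall-weighted F-score with beta = 2, and it drops spaces before counting; these settings and the names `char_ngrams` and `chrf` are assumptions for illustration, and a reference implementation may differ in details.

```python
from collections import Counter


def char_ngrams(text, n):
    """Counter of character n-grams, with spaces removed (a simplification)."""
    chars = text.replace(" ", "")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf(candidate, reference, max_n=6, beta=2.0):
    """Simplified chrF: average char n-gram precision/recall, then F-beta (0-100)."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        cand, ref = char_ngrams(candidate, n), char_ngrams(reference, n)
        if not cand or not ref:
            continue  # text too short to contain n-grams of this order
        overlap = sum((cand & ref).values())  # clipped n-gram matches
        precisions.append(overlap / sum(cand.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    return 100.0 * (1 + beta ** 2) * p * r / (beta ** 2 * p + r)
```

For example, the Finnish forms "talossani" and "talossa" share no whole word, so a word-level metric gives no credit, while this character-level score remains high because most character n-grams overlap.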

TER (Translation Edit Rate): Measures the minimum number of edits (insertions, deletions, substitutions, shifts) required to transform machine output into reference text, normalized by reference length. Lower scores indicate better quality. TER correlates with post-editing effort—professional translators' time to correct MT output—making it practically useful for estimating human-in-the-loop costs.
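
The edit-rate idea can be illustrated with a word-level edit distance. Note the simplification: the sketch below counts only insertions, deletions, and substitutions and omits the block shifts that full TER also allows, so it can overestimate the true TER. The function names are hypothetical.

```python
def word_edit_distance(hyp, ref):
    """Levenshtein distance over tokens (insert, delete, substitute)."""
    prev = list(range(len(ref) + 1))
    for i, h in enumerate(hyp, start=1):
        curr = [i]
        for j, r in enumerate(ref, start=1):
            cost = 0 if h == r else 1
            curr.append(min(prev[j] + 1,          # delete h from the hypothesis
                            curr[j - 1] + 1,      # insert r into the hypothesis
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


def simple_ter(hypothesis, reference):
    """Edits needed to turn hypothesis into reference, per reference word."""
    hyp, ref = hypothesis.split(), reference.split()
    if not ref:
        return 0.0 if not hyp else float("inf")
    return word_edit_distance(hyp, ref) / len(ref)
```

A value of 0.0 means no edits are needed; values can exceed 1.0 when the hypothesis is much longer than the reference.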

COMET: Neural Quality Estimation

COMET (Cross-lingual Optimized Metric for Evaluation of Translation), introduced in 2020, represents a paradigm shift in automatic evaluation. Unlike n-gram-based metrics, COMET uses pre-trained multilingual language models to assess semantic similarity between source, hypothesis, and reference. The metric learns from human quality judgments, training a neural model to predict Direct Assessment (DA) scores.

COMET demonstrates significantly higher correlation with human judgment than BLEU, particularly for high-quality outputs where BLEU's discrimination fails. State-of-the-art COMET models (COMET-22, COMETKiwi) achieve Kendall tau correlations above 0.6 with human rankings, compared to 0.3-0.4 for BLEU. For production quality assessment, COMET increasingly serves as the primary metric, with BLEU used for historical comparison and research standardization.

BERTScore: Embedding-Based Evaluation

BERTScore leverages pre-trained BERT embeddings to compute similarity between candidate and reference translations at the token level. By comparing contextual embeddings rather than surface forms, BERTScore captures semantic similarity that n-gram metrics miss. The metric computes precision, recall, and F1 based on cosine similarity between token embeddings, with optional IDF weighting to emphasize content words over function words.

BERTScore correlates strongly with human judgment across diverse language pairs and domains, offering particular advantage for high-quality translations where n-gram overlap is low but semantic equivalence is high. The metric is language-agnostic (works for any language with BERT or mBERT support) and can leverage domain-specific embeddings for specialized content evaluation.
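
The greedy matching step behind the precision, recall, and F1 computation can be sketched as follows. The sketch takes per-token embedding vectors as plain lists of floats; in practice these would come from a BERT-style encoder, which is outside the scope of this example. IDF weighting is omitted, and the function names are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def greedy_match_f1(cand_vecs, ref_vecs):
    """BERTScore-style precision, recall, and F1 from token embeddings."""
    # Precision: each candidate token is matched to its most similar reference token.
    precision = sum(max(cosine(c, r) for r in ref_vecs) for c in cand_vecs) / len(cand_vecs)
    # Recall: each reference token is matched to its most similar candidate token.
    recall = sum(max(cosine(r, c) for c in cand_vecs) for r in ref_vecs) / len(ref_vecs)
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

With toy two-dimensional vectors, a candidate that contains one extra unrelated token keeps full recall but loses precision, which mirrors how the metric penalizes additions.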

Human Evaluation Frameworks

While automatic metrics enable rapid assessment, authoritative quality judgments require human evaluation. Professional frameworks standardize human assessment, ensuring reliability and comparability across evaluators and systems.

MQM: Multidimensional Quality Metrics

MQM, developed in the EU-funded QTLaunchPad project and since adopted by translation industry stakeholders and by research groups including Google, provides a comprehensive framework for identifying, categorizing, and weighting translation errors. MQM defines a hierarchical taxonomy of error types:

- Accuracy: Addition, omission, mistranslation, untranslated text, entity errors

- Fluency: Grammar, spelling, punctuation, register, style, inconsistency

- Terminology: Inappropriate for context, inconsistent use

- Locale Convention: Date/time format, address format, currency, units

- Design/Markup: Links, formatting, placeholder issues

Each error receives a severity rating (Minor, Major, Critical) with associated penalties. MQM scores aggregate errors into a single quality score, enabling systematic comparison and pass/fail decisions. Major translation buyers (Google, Microsoft, Airbnb) use MQM-based evaluation for vendor quality monitoring and acceptance criteria.
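
As an illustration of how severity-weighted errors roll up into one score, consider the sketch below. The severity weights (1, 5, 10) and the per-word normalization are assumptions made for this example; real MQM deployments define their own weights and scoring formula.

```python
# Assumed penalty weights per severity level; organizations configure their own.
SEVERITY_WEIGHTS = {"minor": 1, "major": 5, "critical": 10}


def mqm_score(errors, word_count):
    """errors: list of (category, severity) tuples; returns a 0-100 score."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    penalty = sum(SEVERITY_WEIGHTS[severity] for _category, severity in errors)
    # Normalize by evaluated word count so long and short samples are comparable.
    return max(0.0, 100.0 * (1 - penalty / word_count))


annotated = [
    ("accuracy/omission", "major"),
    ("fluency/spelling", "minor"),
    ("terminology/inconsistent", "minor"),
]
sample_score = mqm_score(annotated, word_count=200)
```

Under these assumed weights, one major and two minor errors in a 200-word sample cost 7 penalty points, or 3.5 points on the 0-100 scale.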

DQF: Dynamic Quality Framework

DQF, maintained by TAUS (Translation Automation User Society), provides industry-standardized quality evaluation with an emphasis on productivity metrics. DQF enables comparison of translation productivity across different workflows (human-only, MT+post-edit, MT-only) and establishes quality baselines for different content types.

DQF incorporates both automatic metrics (BLEU, edit distance) and human evaluation (error annotation, productivity measurement). The framework's online platform enables crowdsourced evaluation and industry benchmarking, providing reference points for quality expectations across domains and language pairs.

LQA: Language Quality Assessment

LQA represents the application of quality frameworks (typically MQM or custom taxonomies) to production translation assessment. LQA processes sample translations (typically 10-20% of volume) with trained linguists scoring against defined criteria. Results inform pass/fail decisions, vendor payment, and process improvement.

Enterprise LQA programs typically establish quality thresholds: scores above 95% indicate acceptable quality for publication; 85-95% require minor revision; below 85% trigger retranslation or significant post-editing. Thresholds vary by content criticality—marketing content may accept 90% while medical device documentation requires 98%+.
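
The thresholds above translate directly into a routing rule. In this sketch the default cutoffs come from the text, while the treatment of exact boundary values (95 counts as publishable, 85 as minor revision) and the function name are assumptions.

```python
def lqa_decision(score, publish_threshold=95.0, revise_threshold=85.0):
    """Map an LQA score (0-100) to a workflow action."""
    if score >= publish_threshold:
        return "publish"
    if score >= revise_threshold:
        return "minor revision"
    return "retranslate or heavy post-edit"


# Stricter content types raise the publish threshold, e.g. 98 for medical device docs.
medical_decision = lqa_decision(96.0, publish_threshold=98.0)
marketing_decision = lqa_decision(96.0, publish_threshold=90.0)
```

Keeping the thresholds as parameters lets one function serve content types with different criticality.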

Quality by Domain

Translation quality varies dramatically by domain, with AI systems demonstrating strong performance on some content types while struggling with others. Understanding these patterns enables appropriate deployment decisions and workflow design.

High Suitability

- News: BLEU 40-50, fluent, accurate

- Technical: BLEU 35-45, terminological consistency

- Product descriptions: BLEU 40-50, formulaic patterns

- User-generated content: Acceptable at lower scores

- Internal documentation: Cost-effective MT-first

Medium Suitability

- Marketing materials: Requires post-editing

- Technical manuals: Review required for accuracy

- Training materials: Good first pass quality

- Business correspondence: Context-dependent

Low Suitability (Human Required)

- Legal contracts: Precision requirements, liability concerns

- Medical documentation: Patient safety critical

- Certified translations: Regulatory compliance required

- Literary/creative works: Style, voice, nuance preservation

- High-stakes marketing: Brand reputation sensitivity

These domain patterns reflect fundamental characteristics of AI translation: systems excel at pattern-matching in well-represented domains with clear terminological conventions, while struggling with creative variation, high-stakes precision, and contexts where errors carry significant consequences.

Enterprise Implementation Guide

Successful enterprise deployment of AI translation requires thoughtful integration across technical, workflow, and organizational dimensions. This section provides practical guidance for implementing AI translation at scale.

Integration Patterns

REST API Integration

Most AI translation services expose REST APIs accepting HTTP POST requests with source text and returning translated output. Standard integration patterns include:

- Synchronous Calls: For immediate translation needs (real-time chat, on-demand content), with typical latency 100-500ms depending on text length

- Batch Processing: For large-scale document translation, submitting multiple segments and receiving results asynchronously

- Streaming: Progressive translation delivery for long documents, enabling partial results while translation continues

API clients must implement retry logic with exponential backoff for transient failures, handle rate limiting (typically measured in characters or requests per minute), and manage authentication (API keys, OAuth tokens) securely. Request batching—combining multiple sentences in a single API call—reduces overhead and improves throughput.
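
A retry wrapper with exponential backoff might look like the sketch below. It is provider-agnostic: `TransientError` is a hypothetical stand-in for whatever retryable failure a real client raises (for example on HTTP 429 or 503), and the delay values are illustrative defaults rather than recommendations from any provider.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for a retryable failure such as HTTP 429 or 503."""


def call_with_backoff(func, max_attempts=5, base_delay=0.5, max_delay=30.0,
                      sleep=time.sleep):
    """Call func(); on TransientError wait base_delay * 2**attempt plus jitter."""
    for attempt in range(max_attempts):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
```

The `sleep` parameter is injectable so the wrapper can be exercised in tests without real waiting; production code would leave it at the default.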

SDK Implementation

Official SDKs (available for Python, JavaScript, Java, C#, Go, and others) simplify integration by handling authentication, retry logic, and response parsing. SDKs typically offer:

- Type-safe request/response objects

- Automatic connection pooling and keep-alive for high-throughput scenarios

- Built-in retry with configurable policies

- Streaming support for long documents

Batch vs. Real-Time Processing

Processing mode selection depends on use case requirements:

| Mode | Latency | Use Cases |
|---|---|---|
| Real-time | 100-500ms | Chat, live captioning, on-demand translation |
| Near-real-time | 1-10 seconds | Document preview, web page translation |
| Batch | Minutes to hours | Document archives, content localization workflows |
| Scheduled | Daily/weekly | Content sync, automated localization pipelines |

WebSocket Streaming

For real-time applications (live captioning, interpretation support, streaming content), WebSocket connections enable persistent bidirectional communication, reducing connection overhead and enabling server-push updates. WebSocket APIs typically segment input into chunks, streaming translation results as source content arrives, achieving end-to-end latency of 200-800ms per segment.

Customization Strategies

Custom Terminology Databases

Terminology consistency—ensuring specific terms translate consistently across all content—is critical for professional translation. Modern systems support custom terminology through:

- Glossaries: Simple term-translation mappings enforced during decoding, typically supporting 1,000-10,000 entries

- Do-Not-Translate Lists: Brand names, product codes, trademarks that should remain in source language

- Context-Aware Terminology: Different translations for the same source term depending on context/domain

- Adaptive Terminology: Systems that learn preferred translations from user corrections and apply them to future translations
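
When a provider has no native do-not-translate feature, one common workaround is to mask protected terms with placeholders before translation and restore them afterwards. The sketch below shows that idea only; the placeholder format `__DNTn__` is an assumption, and whether a given engine passes such tokens through unchanged must be verified per provider.

```python
import re


def protect_terms(text, do_not_translate):
    """Replace each protected term with a placeholder; return text and mapping."""
    mapping = {}
    # Longest terms first so "Acme Cloud Pro" wins over "Acme Cloud".
    for i, term in enumerate(sorted(do_not_translate, key=len, reverse=True)):
        placeholder = f"__DNT{i}__"
        pattern = re.compile(r"\b" + re.escape(term) + r"\b")
        text, count = pattern.subn(placeholder, text)
        if count:
            mapping[placeholder] = term
    return text, mapping


def restore_terms(text, mapping):
    """Put the original terms back into the translated text."""
    for placeholder, term in mapping.items():
        text = text.replace(placeholder, term)
    return text
```

A typical flow is `protect_terms`, then the translation call, then `restore_terms` on the result. The product names used in any test of this sketch are invented examples.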

Style Guide Adaptation

Beyond terminology, style guidelines govern tone, register, and linguistic conventions. LLM-based systems can adapt to style requirements through prompting:

- Formal vs. informal register specification

- Sentence length preferences (shorter for mobile, longer for documentation)

- Active vs. passive voice preferences

- Gender-neutral language requirements

- Regional variant preferences (e.g., European vs. Latin American Spanish)

Domain Adaptation

For specialized content, domain adaptation improves accuracy through:

- Custom Model Training: Fine-tuning on in-domain parallel data (requires 10,000+ sentence pairs)

- In-Context Learning: Providing domain examples in prompts for LLM-based systems

- Adapter Layers: LoRA and other parameter-efficient fine-tuning for domain specialization with minimal training

Brand Voice Training

For marketing and brand content, maintaining consistent voice across languages requires training on previously translated brand materials. Few-shot prompting with examples of preferred brand voice in the target language guides LLM output toward consistent tone and personality.

Workflow Integration

CAT Tool Integration

Computer-Assisted Translation (CAT) tools such as Trados Studio, memoQ, Wordfast, and Phrase integrate AI translation through plugins and API connections. Integration patterns:

- Pre-translation: Automatically filling translation memories with MT suggestions before human work begins

- Interactive MT: Providing real-time suggestions as translators work

- MT Confidence Scoring: Highlighting low-confidence segments for focused human review

- Post-Editing Workflow: Structured processes for MT editing with productivity tracking

TMS Connectivity

Translation Management Systems (TMS)—Smartling, Crowdin, Lokalise, XTM—integrate AI translation as a core engine option. Enterprise TMS platforms offer:

- Multiple MT engine selection (Google, Microsoft, DeepL, custom)

- Automated pre-translation of new content

- Quality estimation and routing (MT-only vs. human post-edit vs. human translate)

- Cost tracking and optimization

- Continuous improvement loops (post-edits feeding back to MT training)

CMS Plugins

Content Management Systems integrate AI translation through plugins:

- WordPress: WPML, Polylang, Weglot with MT integration

- Drupal: Translation Management module with MT connectors

- Sitecore, Adobe Experience Manager: Enterprise connectors

- Headless CMS: API-based translation workflows

CI/CD Pipeline Integration

For software localization, AI translation integrates into CI/CD pipelines:

- Extracting new source strings from code repositories

- Automatic pre-translation of new content

- Human review assignment for modified strings

- Quality gates requiring minimum quality scores

- Automated deployment of approved translations

Cost Analysis

Per-Character Pricing Models

Traditional translation APIs price by characters processed:

| Provider | Price per 1M chars | Notes |
|---|---|---|
| DeepL API Free | $0 (500K chars/month) | Limited volume, no customization |
| DeepL API Pro | $6.99 | Unlimited, glossary support |
| Google Translate Basic | $20 | Standard quality |
| Google Translate Premium | $40 | Enhanced batch, custom models |
| Microsoft Translator | $10 | Custom model training extra |
| Amazon Translate | $15 | Volume discounts available |

Per-Token Pricing (LLMs)

LLM-based translation prices by tokens (subword units, typically 1 token ≈ 4 characters for English):

- GPT-4o: $2.50 (input) to $10 (output) per 1M tokens

- Claude 3.5 Sonnet: $3 (input) to $15 (output) per 1M tokens

- Gemini 1.5 Pro: $3.50-7 per 1M tokens

LLM pricing is generally 2-5x higher than dedicated MT APIs, but may be justified for complex content requiring context handling or customization through prompting.

Volume Discounts

Enterprise agreements typically offer significant volume discounts:

- 1-100M characters/month: Standard pricing

- 100M-1B characters/month: 20-40% discount

- 1B+ characters/month: Custom pricing, 50%+ discount

Human-in-the-Loop Costs

Total cost of ownership must include human review for quality assurance:

- MT-only (no review): API cost only, suitable for gisting/internal use

- MT + spot-check (5-10%): API cost + minimal review, suitable for low-risk content

- MT + full post-edit: API cost + $0.03-0.08/word for professional editing

- Human translation: $0.10-0.30/word (baseline comparison)

Even with full post-editing, MT-first workflows typically reduce costs 30-50% compared to human-only translation while maintaining equivalent quality.
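
A back-of-the-envelope comparison shows how these figures combine. The rates in the example come from the ranges above; the average of 6 characters per word (including the trailing space) is an assumption, as are the function names.

```python
def mt_post_edit_cost(words, api_price_per_m_chars, post_edit_rate_per_word,
                      chars_per_word=6.0):
    """API cost plus full post-editing cost, in dollars."""
    api_cost = words * chars_per_word / 1_000_000 * api_price_per_m_chars
    return api_cost + words * post_edit_rate_per_word


def human_cost(words, rate_per_word):
    """Cost of human-only translation, in dollars."""
    return words * rate_per_word


# 100,000 words at $20/M chars with $0.08/word post-editing vs. $0.15/word human.
mt_total = mt_post_edit_cost(100_000, 20.0, 0.08)
human_total = human_cost(100_000, 0.15)
savings = 1 - mt_total / human_total
```

In this scenario the API charge is a small fraction of the total, so the post-editing rate, not the MT price, dominates the cost of an MT-first workflow.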

Technical Challenges and Solutions

Handling Linguistic Complexity

Natural languages present diverse structural challenges that complicate automatic translation. Understanding these complexities and their solutions enables better system selection and quality expectation setting.

Morphologically Rich Languages

Languages like Finnish, Turkish, Hungarian, and Arabic exhibit extensive inflection: single words may encode what requires multiple words in English. A Finnish noun can have thousands of forms depending on case, number, and possession. This richness challenges translation systems trained primarily on morphologically simpler languages.

Solutions: Character-level and subword tokenization (BPE, SentencePiece) enable representation of morphological variants without requiring full-word vocabulary entries. Morphological analysis pre-processing can normalize words to their base forms for translation, then re-inflect output. Multilingual training on diverse language families improves morphological handling across the board.

Word Order Differences

Languages vary dramatically in word order: English uses Subject-Verb-Object (SVO), Japanese uses Subject-Object-Verb (SOV), and Arabic allows flexible ordering with case marking. These structural differences require reordering during translation, challenging for systems with limited context windows.

Solutions: Attention mechanisms inherently learn alignment and reordering from parallel data. Transformer architectures with self-attention handle reordering effectively by allowing each position to attend to all others. For distant reordering, larger context windows (enabled by efficient attention variants like linear attention or sparse attention) maintain coherence across long-distance dependencies.

Honorifics and Formality

Languages like Japanese, Korean, and Thai encode social hierarchy through complex honorific systems. Japanese has distinct verb forms, vocabulary, and sentence endings depending on speaker-listener relationship and context. Choosing appropriate formality requires understanding social context that may not be explicit in source text.

Solutions: Systems like DeepL offer explicit formality controls (formal/informal). LLMs can be prompted with context about speaker relationships to select appropriate register. Domain adaptation on formal vs. informal corpora trains models for specific register requirements.

Gender and Number Agreement

Many languages require grammatical agreement in gender and number between nouns, adjectives, articles, and verbs. When translating from English (which largely lacks grammatical gender) to Spanish or French, the system must infer gender for ambiguous terms, sometimes resulting in errors or bias.

Solutions: Gender-neutral translation options avoid forced binary choices. Context-aware models use surrounding information to infer likely gender. Explicit gender marking in source (when available) can guide correct agreement.

Context and Disambiguation

Homonym Resolution

Homonyms—words with multiple meanings—present persistent challenges. "Bank" can mean financial institution or river edge; "run" has hundreds of senses. Sentence-level NMT often fails to select correct translations without sufficient context.

Solutions: Extended context windows (thousands of tokens) enable document-level disambiguation. LLMs with broad knowledge can infer likely senses from world knowledge. Domain adaptation prioritizes domain-appropriate senses.

Coreference Handling

Coreference—tracking what pronouns refer to across sentences—is essential for coherent translation. When "she" refers to "the CEO mentioned in paragraph one," maintaining that reference (or using the correct gendered form in languages with grammatical gender) requires document-level understanding.

Solutions: Document-level models process extended contexts to track entities. Coreference resolution pre-processing can annotate references before translation. LLMs with large context windows handle coreference implicitly through self-attention across the full document.

Discourse Coherence

Beyond sentence-level accuracy, professional translation requires discourse coherence—logical flow, appropriate transition markers, and maintained rhetorical structure across paragraphs. Sentence-level MT treats each sentence independently, potentially disrupting cohesive devices.

Solutions: Document-level NMT models extend the context window to capture inter-sentence dependencies. Discourse-aware training objectives optimize for coherence metrics. LLMs naturally handle discourse through their extended context and holistic understanding.

Low-Resource Languages

The majority of the world's 7,000+ languages lack sufficient digital resources for training high-quality translation systems. Low-resource languages face data scarcity, limited computational research, and quality gaps perpetuating digital inequality.

Data Scarcity Solutions

Addressing data scarcity requires creative approaches:

- Community Collection: Projects like Tatoeba crowdsource translated sentences from speakers, while Common Voice collects volunteer voice recordings

- Religious Text Translation: Bible translations exist for 1,000+ languages, providing foundational parallel corpora

- Document Digitization: OCR and translation of printed materials in endangered languages

- Synthetic Data: Back-translation of monolingual target-language text into the source language, optionally through a pivot language, to create synthetic parallel pairs

Transfer Learning Approaches

Transfer learning enables quality improvement without extensive parallel data:

- Multilingual Training: Training on multiple languages simultaneously allows sharing of representations; learning from high-resource pairs improves low-resource translation

- Massively Multilingual Models: Systems supporting 100+ languages (mBART, mT5, NLLB) learn cross-lingual transfer during pre-training

- Pivot Translation: Translating through an intermediate high-resource language (e.g., Swahili → English → French) when direct parallel data is unavailable

Community Contributions

Meta's NLLB project exemplifies community-driven low-resource language support, partnering with native speakers for data collection, evaluation, and deployment. Similar grassroots efforts through Mozilla Common Voice, Wikipedia translation drives, and academic documentation projects expand resources for underserved languages.

Domain Adaptation

Generic translation models underperform on specialized content. Domain adaptation techniques customize systems for specific fields (medical, legal, technical, financial) where terminology and style differ significantly from general language.

In-Domain Data Collection

The gold standard for domain adaptation is training on domain-specific parallel corpora. Sources include:

- Translated technical documentation from manufacturers

- Legal document translations from courts and law firms

- Medical literature translations (PubMed, research papers)

- Financial reports and regulatory filings with official translations

- Software localization files (strings, UI text)

Fine-Tuning Strategies

Fine-tuning adapts pre-trained models to specific domains:

- Continued Pre-training: Further training on domain monolingual data to adapt vocabulary and style before parallel fine-tuning

- Supervised Fine-Tuning: Training on domain parallel data with standard NMT objectives

- Mixed Fine-Tuning: Combining general and domain data to prevent catastrophic forgetting of general capabilities

Adapter Layers (LoRA)

Low-Rank Adaptation (LoRA) and related techniques add small trainable adapter layers to frozen base models, enabling domain specialization with minimal parameter updates. This approach is computationally efficient (enabling adaptation on consumer hardware), storage efficient (separate small adapters per domain), and avoids catastrophic forgetting by preserving base model weights.

LoRA adapters with 10-100M parameters can achieve comparable domain adaptation to full fine-tuning of 600M+ parameter models, democratizing domain customization.
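
The parameter savings follow from simple arithmetic: for a frozen weight matrix of shape d_out x d_in, a rank-r adapter trains only two small factors. The sketch below counts those parameters and applies the standard LoRA update y = Wx + (alpha / r) * B(Ax) using plain Python lists. The layer sizes in the example are illustrative assumptions, not figures for any particular translation model.

```python
def lora_trainable_params(d_out, d_in, rank):
    """Parameters in the low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def matvec(matrix, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """y = W x + (alpha / rank) * B (A x); W stays frozen, only A and B train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


# A 1024 x 1024 projection with a rank-8 adapter (illustrative sizes).
full = 1024 * 1024
adapter = lora_trainable_params(1024, 1024, 8)
fraction = adapter / full
```

Because B is conventionally initialized to zero, the adapted layer starts out identical to the base layer, and removing the adapter restores the base model exactly.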

Security, Privacy, and Compliance

Enterprise AI translation involves transmitting potentially sensitive content to external services, raising legitimate security and privacy concerns. Understanding provider practices and compliance frameworks enables risk-appropriate deployment decisions.

Data Security

Encryption in Transit and at Rest

All major AI translation providers enforce TLS 1.2+ encryption for data in transit, protecting content during API transmission. Enterprise plans may offer dedicated encryption certificates or private connectivity (AWS PrivateLink, Azure Private Endpoint) eliminating public internet exposure.

Data at rest—logs, cached results, training data—is typically encrypted using AES-256 or equivalent standards. Enterprise agreements may specify data residency (storage in specific geographic regions) for compliance with data localization requirements.

Zero-Retention Policies

Provider retention policies vary significantly:

- DeepL Pro: Zero-retention policy for Pro API—content is not stored or used for model improvement

- Google Cloud: Content stored temporarily for service operation, with data processing terms specifying limited use

- Microsoft Azure: Configurable retention with options for no logging

- OpenAI: API data not used for model training by default (since March 2023), with enterprise agreements offering additional controls

Enterprise agreements should explicitly specify retention terms, training data exclusion, and audit rights to ensure compliance with organizational security policies.

On-Premise and Private Cloud Options

For maximum data control, several options eliminate third-party data exposure:

- On-Premise Deployment: Microsoft Translator and other providers offer containerized on-premise deployment for air-gapped environments

- Private Cloud: Deploying open-source models (NLLB, OPUS-MT, Marian) on private infrastructure

- Self-Hosted LLMs: Running open-source LLMs (Llama, Mistral) for translation without external API calls

These options trade convenience (automatic updates, elastic scaling) for control, requiring internal infrastructure and expertise for model management.

Compliance Requirements

GDPR Considerations

European Union GDPR requirements affect AI translation when processing personal data:

- Data Processing Agreements (DPAs): Required with translation providers, specifying data handling, subprocessor disclosure, and security measures

- Data Residency: EU data should remain in EU regions; providers offer EU-specific endpoints

- Right to Deletion: Procedures for requesting data removal from provider systems

- Privacy Impact Assessments: Evaluating translation processing for high-risk personal data scenarios

HIPAA for Medical Content

Healthcare organizations translating protected health information (PHI) must ensure HIPAA compliance:

- Business Associate Agreements (BAAs) with translation providers

- Audit logs of all translation activities

- Access controls limiting translation to authorized personnel

- De-identification of PHI before translation, with re-identification of the translated output afterward

Few translation providers offer explicit HIPAA compliance; healthcare organizations typically opt for on-premise or private cloud deployment to maintain control.

SOC 2 Type II and ISO 27001

Enterprise translation buyers typically require security certifications:

- SOC 2 Type II: Audited controls for security, availability, processing integrity, confidentiality, and privacy

- ISO 27001: International standard for information security management systems

- ISO 27017/27018: Cloud-specific and personal data protection extensions

Major cloud providers (AWS, Azure, Google Cloud) maintain these certifications for their translation services. Smaller providers or self-hosted solutions require organizational certification of the deployment environment.

IP Protection

Confidential Document Handling

Legal, financial, and strategic documents require careful handling:

- Zero-retention provider selection for highly sensitive content

- On-premise deployment for trade secrets and litigation materials

- Segmented workflows (general MT for public content, human-only for confidential)

- NDA requirements for human post-editors handling sensitive MT output

Competitive Intelligence Risks

Some organizations worry that translation providers could mine submitted content for competitive intelligence. While major providers explicitly prohibit this in terms of service and enterprise contracts, risk-averse organizations may prefer:

- Self-hosted models eliminating third-party exposure

- Segmented translation (generic content via API, sensitive content on-premise)

- Enterprise agreements with explicit data use restrictions and audit rights

The practical risk appears low given provider reputational incentives and contractual protections, but organizational risk tolerance varies.

Performance Optimization

Latency Reduction

Translation latency affects user experience in real-time applications and throughput in batch processing. Several techniques optimize response times:

Model Quantization

Quantization reduces model precision from 32-bit floating point to 16-bit floating point or 8-bit integers, decreasing model size and increasing inference speed with minimal quality degradation. Techniques include:

- FP16 (half-precision): ~2x speedup, minimal quality loss

- INT8 quantization: ~4x speedup, <1% BLEU degradation with calibration

- Dynamic quantization: Runtime conversion for flexible deployment
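
The core of INT8 quantization is a scale factor that maps floating-point weights onto a small integer range. The sketch below shows symmetric per-tensor quantization on a plain list of weights; production toolkits add calibration data, per-channel scales, and fused integer kernels, none of which are shown here. The function names are assumptions.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q_weights, scale):
    """Recover approximate float weights from integers and the scale."""
    return [q * scale for q in q_weights]


weights = [0.5, -1.27, 0.01, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Rounding to the nearest integer bounds the reconstruction error of each weight by half the scale, which is why a tensor with a few large outliers quantizes poorly: the outliers inflate the scale for every other weight.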

Distillation Techniques

Knowledge distillation trains smaller "student" models to replicate larger "teacher" model behavior. A 60M-parameter distilled model may achieve 90% of a 600M-parameter model's quality with 10x inference speed, enabling real-time deployment on edge devices.

Edge Deployment

Deploying models on edge devices (mobile phones, embedded systems) eliminates network latency entirely:

- Mobile-optimized models: NLLB-200 distilled 600M, MarianMT compact models

- On-device inference: 100-500ms latency regardless of network conditions

- Privacy preservation: No data leaves the device

- Offline capability: Translation without internet connectivity

Caching Strategies

Translation caching eliminates redundant API calls:

- Exact match cache: Identical source segments return cached translations

- Fuzzy match cache: Similar segments retrieved from translation memory, ranked by edit distance to the new source segment

- Result caching: Storing API responses to avoid repeated calls

- TTL (time-to-live): Cache expiration for evolving terminology
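The caching strategies above can be combined in a small in-memory sketch. It assumes a single-process cache and uses the standard library's `difflib.SequenceMatcher` similarity ratio as a stand-in for a true edit-distance metric; the class name `TranslationCache`, its parameters, and the default thresholds are hypothetical.

```python
import difflib
import time
from typing import Dict, Optional, Tuple


class TranslationCache:
    """Exact and fuzzy lookup of cached translations with TTL expiry."""

    def __init__(self, ttl_seconds: float = 3600.0, fuzzy_threshold: float = 0.85):
        self.ttl_seconds = ttl_seconds
        self.fuzzy_threshold = fuzzy_threshold
        # Maps source segment -> (translation, time stored).
        self._entries: Dict[str, Tuple[str, float]] = {}

    def put(self, source: str, translation: str, now: Optional[float] = None) -> None:
        stored_at = time.monotonic() if now is None else now
        self._entries[source] = (translation, stored_at)

    def get(self, source: str, now: Optional[float] = None) -> Optional[Tuple[str, float]]:
        """Return (translation, similarity), or None on a miss."""
        current = time.monotonic() if now is None else now
        # Drop entries older than the TTL so stale terminology is not reused.
        self._entries = {
            key: value
            for key, value in self._entries.items()
            if current - value[1] < self.ttl_seconds
        }
        if source in self._entries:
            return self._entries[source][0], 1.0
        best_translation, best_score = None, 0.0
        for candidate, (translation, _) in self._entries.items():
            score = difflib.SequenceMatcher(None, source, candidate).ratio()
            if score > best_score:
                best_translation, best_score = translation, score
        if best_translation is not None and best_score >= self.fuzzy_threshold:
            return best_translation, best_score
        return None
```

A caller would typically use an exact hit (similarity 1.0) directly, while a fuzzy hit is better treated as a suggestion for review, because the cached translation belongs to a slightly different source segment. The linear scan is only suitable for small caches; larger translation memories need an index.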

Scalability

Load Balancing and Auto-Scaling

For high-volume applications, cloud-native architectures provide elastic scaling:

- Load balancers distribute requests across multiple API endpoints or model instances

- Auto-scaling provisions additional capacity during traffic spikes

- Regional deployment reduces latency for global user bases

- Containerization (Kubernetes) enables efficient resource utilization

Rate Limiting Strategies

API rate limits require careful management:

- Token bucket algorithms for smooth request distribution

- Request batching to maximize throughput within rate limits

- Circuit breakers preventing cascade failures when limits are exceeded

- Queue management prioritizing critical vs. background translation jobs
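As an illustration of the first item, here is a minimal token bucket sketch. It assumes a single-threaded client (no locking) and accepts an explicit `now` argument so the behavior can be checked deterministically; the class and parameter names are illustrative.

```python
import time
from typing import Optional


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate_per_second`."""

    def __init__(self, rate_per_second: float, capacity: float, now: Optional[float] = None):
        self.rate = rate_per_second
        self.capacity = capacity
        self.tokens = capacity  # Start full so an initial burst is allowed.
        self.updated_at = time.monotonic() if now is None else now

    def try_acquire(self, cost: float = 1.0, now: Optional[float] = None) -> bool:
        """Consume `cost` tokens if available; return False if the caller should wait."""
        current = time.monotonic() if now is None else now
        elapsed = max(0.0, current - self.updated_at)
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated_at = current
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A client would call `try_acquire()` before each API request and queue or delay the request when it returns `False`. Setting `cost` to the number of segments in a batch lets the same bucket govern batched requests.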

Cost Optimization

Strategic approaches reduce translation costs without compromising quality:

- Model Selection by Use Case: Using lower-cost APIs for internal/gisting content, premium APIs for customer-facing materials

- Batch Processing: Queuing non-urgent translation during off-peak hours for potential discounted rates

- Hybrid Workflows: AI-first translation with targeted human review, rather than blanket human post-editing

- Volume Commitments: Negotiating enterprise discounts for predictable high volumes

- Translation Memory: Maximizing reuse of previously translated content to reduce API calls

Cost optimization often involves 80/20 analysis: identifying the 20% of content that justifies premium processing while optimizing costs for the remaining 80%.

Use Case Analysis

AI translation suitability varies dramatically by use case. This section provides a structured framework for evaluating suitability and designing matching workflows.

High-Suitability Use Cases

These scenarios are ideal for AI translation deployment:

Internal Documentation

Knowledge bases, internal wikis, SOPs, and employee communications are ideal for MT-first workflows. Quality requirements are moderate (95%+ accuracy acceptable), volume is often high, and speed matters more than literary perfection. Review can be spot-checked rather than comprehensive.

Customer Support Chat

Real-time chat translation enables multilingual support without hiring native speakers for every language. Systems such as Google Translate and Unbabel are used for conversational translation, with quality sufficient for resolving routine inquiries. Human agents review conversations when sentiment detection flags frustration or confusion.

Website Localization (First Pass)

Large-scale website translation benefits enormously from AI-first approaches. Marketing sites, product catalogs, and help centers with thousands of pages achieve rapid deployment with MT pre-translation followed by human refinement of high-traffic pages. This approach reduces time-to-market from months to weeks.

Product Descriptions

E-commerce product catalogs follow formulaic patterns that AI handles well. Specifications, dimensions, materials, and features translate consistently. Style adaptation through custom glossaries keeps wording brand-appropriate across thousands of SKUs.

User-Generated Content

Reviews, comments, forum posts, and social media require translation at scale where perfect accuracy is less critical than gist understanding. AI translation enables monolingual moderators to oversee multilingual communities and supports analytics across language barriers.

Medium-Suitability Use Cases

Marketing Materials (Post-Edit Required)

Marketing content requires cultural adaptation beyond literal translation. AI provides a solid first draft, but transcreation (creative adaptation) requires human expertise to preserve emotional impact, humor, and cultural resonance. MT + post-edit workflows balance efficiency with quality.

Technical Manuals (Review Required)

Technical accuracy is critical—incorrect translations of safety warnings or procedures could cause harm. AI translation with subject matter expert review offers a cost-effective approach. Terminology consistency is essential; custom glossaries ensure correct technical term translation.

Training Materials

E-learning content requires accuracy and clarity but offers flexibility for iterative improvement. AI translation enables rapid course deployment with learner feedback identifying sections requiring refinement.

Low-Suitability Use Cases

Legal Contracts

Legal documents require precision where ambiguity creates liability. AI translation may handle routine clauses but requires attorney review for enforceability. Court submissions, contracts, and regulatory filings typically warrant human translation with legal expertise.

Medical Documentation

Patient safety depends on accurate medical translation. Drug labels, clinical trial documentation, and patient instructions require certified medical translators. AI may support preliminary understanding but should not be the sole source for medical decisions.

Certified Translations

Immigration documents, academic transcripts, and legal certifications require sworn translators with official credentials. AI translation is not accepted for these purposes regardless of quality.

Literary and Creative Works

Literature requires preserving voice, rhythm, cultural references, and emotional nuance, all capabilities where AI remains limited. Machine translation of poetry or literary prose typically fails to achieve acceptable quality, serving at best as a rough draft for human translators.

High-Stakes Marketing

Brand-differentiating campaigns, Super Bowl commercials, and luxury brand positioning require transcreation—creative adaptation that AI cannot perform. The cost of translation errors (brand damage) outweighs translation cost savings.

Decision Framework: When evaluating use case suitability, consider: (1) Error cost: what happens if the translation is imperfect? (2) Volume: is scale sufficient to justify MT investment? (3) Speed requirements: is rapid turnaround essential? (4) Formulaic content: does the content follow predictable patterns? As a rule of thumb, high error cost with highly formulaic content calls for MT plus human review; high error cost with non-formulaic content calls for human translation; and low error cost makes MT-only acceptable.
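The three routing rules in the decision framework can be written down as a small function. This is a sketch that encodes only the stated rules on error cost and formulaic content. Volume and speed are left out because the framework gives no explicit rule for them, and the `Workflow` enum and function name are illustrative.

```python
from enum import Enum


class Workflow(Enum):
    MT_ONLY = "MT only"
    MT_WITH_REVIEW = "MT + human review"
    HUMAN = "human translation"


def recommend_workflow(high_error_cost: bool, formulaic: bool) -> Workflow:
    """Map the two decisive factors onto a recommended workflow."""
    if not high_error_cost:
        # Low error cost: imperfect output is tolerable, so MT alone suffices.
        return Workflow.MT_ONLY
    # High error cost: formulaic content can still start from MT with review.
    return Workflow.MT_WITH_REVIEW if formulaic else Workflow.HUMAN
```

For example, a safety section of a technical manual (high error cost, formulaic) maps to `Workflow.MT_WITH_REVIEW`, while a brand campaign (high error cost, not formulaic) maps to `Workflow.HUMAN`.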

Implementation Best Practices

Pilot Project Design

Successful AI translation adoption begins with well-designed pilots:

- Select Representative Content: Pilot with content reflecting your full diversity (different domains, styles, and language pairs)

- Define Success Metrics: Establish BLEU/COMET targets, human evaluation criteria, and productivity measures before starting

- Control Group Comparison: Compare MT + post-edit against human-only translation for equivalent content

- Stakeholder Involvement: Include translators, reviewers, and end-users in evaluation to capture diverse perspectives

- Iterative Refinement: Use pilot learnings to adjust terminology, style guides, and workflows before scaling

Quality Threshold Setting

Establish clear quality thresholds for workflow routing:

| Quality Score | Action |
|---|---|
| 95%+ (COMET) | Publish without review (low-risk content) |
| 85-95% (COMET) | Light post-edit (spot-check high-confidence sections) |
| 70-85% (COMET) | Full post-edit required |
| Below 70% | Retranslate from scratch (human or different MT) |
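A routing function for these thresholds might look like the sketch below. It assumes the quality score has already been scaled to a 0-100 range and that a score exactly on a boundary falls into the higher band, since the table does not say which band owns the boundary values. The action labels are illustrative.

```python
def route_by_quality(score_percent: float) -> str:
    """Return the workflow action for a segment's quality score (0-100 scale)."""
    if score_percent >= 95.0:
        return "publish"           # Low-risk content only.
    if score_percent >= 85.0:
        return "light_post_edit"
    if score_percent >= 70.0:
        return "full_post_edit"
    return "retranslate"
```

Keeping the thresholds in one function makes them easy to adjust per content type once pilot data shows where reviewers actually intervene.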

Human Review Integration

Effective human-AI collaboration maximizes both efficiency and quality:

- Post-Editing Guidelines: Train reviewers on light vs. full post-editing—light editing fixes only clear errors; full editing improves style and flow

- Confidence-Based Routing: Use MT confidence scores to route high-confidence content to light review, low-confidence to full review

- Interactive MT: Provide translators with real-time MT suggestions they can accept, modify, or ignore based on quality

- Productivity Measurement: Track words-per-hour for MT + post-edit vs. human translation to quantify efficiency gains

Continuous Improvement Loops

Post-editing creates valuable training data for system improvement:

- Feedback Collection: Capture post-edit distances, error types, and translator ratings for each segment

- Retraining Cycles: Periodically retrain custom models on accumulated post-edited data (quarterly or semi-annually)

- Terminology Updates: Expand custom glossaries based on frequently corrected terms

- Error Analysis: Categorize recurring error patterns to guide targeted improvements (domain adaptation, style guide refinement)
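Post-edit distance, mentioned under feedback collection, can be approximated with a word-level Levenshtein distance between the raw MT output and the post-edited text. The sketch below assumes whitespace tokenization and normalizes by the length of the post-edited segment; both choices are simplifications, and the function names are illustrative.

```python
from typing import List


def word_edit_distance(a: List[str], b: List[str]) -> int:
    """Levenshtein distance over word tokens (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, word_a in enumerate(a, start=1):
        current = [i]
        for j, word_b in enumerate(b, start=1):
            cost = 0 if word_a == word_b else 1
            current.append(
                min(
                    previous[j] + 1,         # delete word_a
                    current[j - 1] + 1,      # insert word_b
                    previous[j - 1] + cost,  # keep or substitute
                )
            )
        previous = current
    return previous[-1]


def post_edit_rate(mt_output: str, post_edited: str) -> float:
    """Edits per word of the final text; 0.0 means the MT output was left unchanged."""
    mt_tokens = mt_output.split()
    pe_tokens = post_edited.split()
    return word_edit_distance(mt_tokens, pe_tokens) / max(len(pe_tokens), 1)
```

Tracking this rate per segment, domain, and language pair shows where the engine needs the most correction and which content is worth feeding into retraining first.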

Vendor Selection Criteria

Evaluate translation providers systematically:

- Language Coverage: Do they support your required language pairs with adequate quality?

- Customization: Can you train custom models, enforce terminology, and adapt to domains?

- Integration: Do they offer SDKs, REST APIs, and CAT tool connectors compatible with your stack?

- Security: Do they meet your compliance requirements (GDPR, HIPAA, SOC 2)?

- Pricing: Are costs predictable and scalable with volume discounts?

- Support: Do they offer enterprise support, SLAs, and dedicated account management?

Internal Capability Building

Long-term success requires internal expertise:

- MT Literacy Training: Educate translators and project managers on MT capabilities, limitations, and best practices

- Post-Editing Skills: Develop specialized post-editing competencies distinct from traditional translation

- Technical Integration: Build internal capabilities for API integration, workflow automation, and custom model training

- Quality Management: Establish internal quality frameworks and evaluation capabilities

Future Technical Developments

AI translation continues evolving rapidly. Key technical developments on the horizon will reshape capabilities and deployment patterns.

Multimodal Translation

Multimodal AI—combining text, image, and audio understanding—enables new translation scenarios:

- Image Translation: OCR + translation + text rendering for instant translation of signs, menus, and documents via camera

- Video Subtitling: Automated speech recognition + translation + subtitle timing for video localization at scale

- Visual Context: Using image content to disambiguate text translation (e.g., "bank" translated differently for financial document vs. river scene)

Real-Time Speech Translation

Speech-to-speech translation enables natural multilingual conversation:

- End-to-end models combining ASR (automatic speech recognition), MT, and TTS (text-to-speech)

- Latency reduction to sub-2-second end-to-end translation

- Voice preservation maintaining speaker characteristics in target language

- Streaming processing for natural turn-taking in conversation

Systems like Meta's SeamlessM4T and Google's Translatotron represent early implementations, with rapid improvement expected as models scale and optimize.

Domain-Specific Model Proliferation

The future will see specialized models for specific domains:

- Medical translation models trained on clinical corpora and medical ontologies

- Legal translation models incorporating jurisdictional knowledge

- Technical models for specific industries (automotive, aerospace, software)

- Literary models trained on creative writing and stylistic adaptation

These specialized models will outperform general-purpose systems in their domains, potentially achieving expert-level accuracy for restricted content types.

Quantum Computing Implications

While still speculative, quantum computing may eventually impact translation:

- Quantum machine learning algorithms potentially discovering more efficient representations

- Quantum annealing for optimization problems in decoding and alignment

- Significant impact likely 10+ years away given current quantum hardware limitations

Edge AI Deployment

On-device translation is rapidly improving:

- Mobile NPUs (neural processing units) enabling efficient local inference

- Model compression techniques achieving usable quality in 100MB models

- Privacy-preserving translation without network transmission

- Offline capability for travelers and field workers

Edge deployment will democratize access, enabling translation in bandwidth-constrained environments and addressing privacy concerns for sensitive communications.

Conclusion

AI translation technology has achieved remarkable technical maturity, evolving from laboratory curiosity to enterprise-grade infrastructure. Neural machine translation systems demonstrate near-human quality for general content across major language pairs, while large language models offer unprecedented flexibility and context handling. The technology stack—from transformer architectures to attention mechanisms, subword tokenization to domain adaptation—provides a robust foundation for diverse translation applications.