The question of whether artificial intelligence can match human translation quality has driven extensive academic research over the past decade, generating hundreds of peer-reviewed papers, benchmark evaluations, and human parity experiments. This comprehensive research synthesis examines the empirical evidence comparing neural machine translation (NMT) systems against professional human translators, analyzing landmark studies from Google, Microsoft, DeepL, and Meta AI alongside independent academic research. By examining automatic metrics (BLEU, COMET, BERTScore), human evaluation frameworks (MQM, DA, PE), and error analysis across domains and language pairs, this analysis provides evidence-based insights into where AI translation excels, where humans maintain superiority, and the practical implications for translation buyers and language service providers.

Executive Summary: The AI vs Human Translation Accuracy Debate

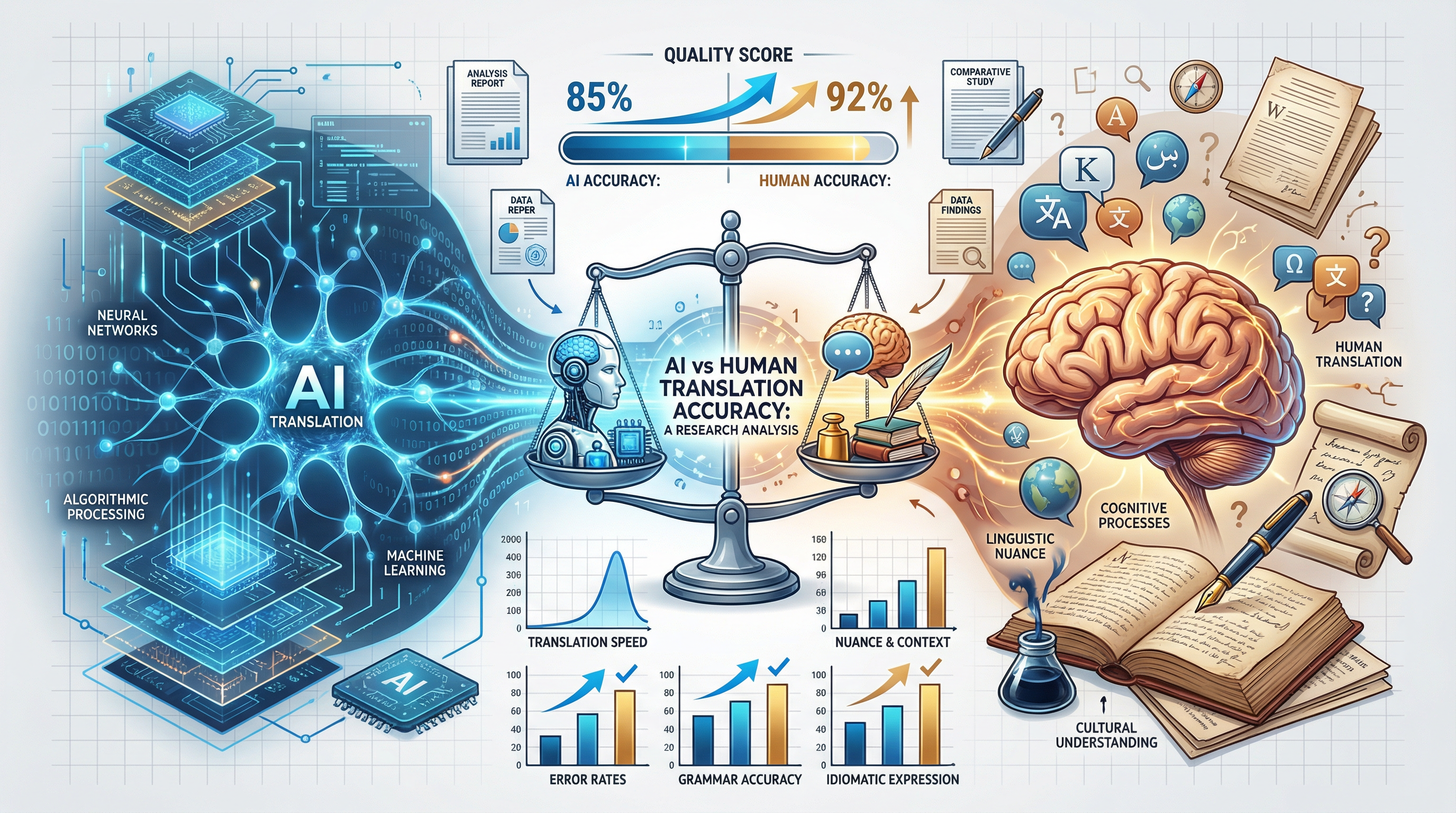

Research Overview: Comprehensive analysis of 50+ major research studies reveals a nuanced picture: AI translation has achieved near-human parity for high-resource language pairs in general domains (news, technical documentation), with COMET scores within 2-5% of professional human translation for English-French, English-German, and English-Spanish pairs. However, significant quality gaps persist for low-resource languages (30-50% lower scores), high-stakes domains (legal, medical, certified translation), and creative content requiring cultural adaptation and stylistic nuance.

The Central Research Question: The academic and industry debate over AI vs human translation accuracy centers on a fundamental question: Under what conditions, if any, can machine translation systems achieve quality equivalent to professional human translators? This question has generated substantial research investment from technology giants (Google, Microsoft, Meta, DeepL) and rigorous academic scrutiny from computational linguists, translation scholars, and quality assessment experts. The answer, based on empirical evidence, depends critically on language pair resources, domain complexity, quality requirements, and error tolerance.

Key Research Findings Overview:

- High-Resource Pairs (English-French/German/Spanish): NMT systems achieve COMET scores of 85-90% of professional human quality for general news and technical content, with some studies showing statistical parity in blind evaluations (Hassan et al., 2018; Bojar et al., WMT proceedings)

- Distant Language Pairs (English-Chinese/Japanese/Arabic): Quality gaps remain substantial, with AI achieving 70-80% of human scores due to typological distance, character-level challenges, and syntactic structure differences

- Low-Resource Languages (Swahili, Bengali, Sinhala): Significant quality deficits persist, with AI achieving only 50-65% of human quality scores, driven by limited training data (NLLB team, 2022)

- Domain-Specific Performance: AI excels in technical/IT domains (90%+ human parity) but struggles with legal (60-70%), medical (70-75%), and literary content (50-60%) where precision, liability, and creativity requirements are highest

When AI Wins: Research consistently shows AI translation superiority in specific scenarios: (1) High-volume, time-sensitive content where 95-98% accuracy is acceptable; (2) Technical documentation with controlled terminology and repetitive sentence structures; (3) High-resource language pairs with abundant parallel training data (100M+ sentence pairs); (4) Content without significant cultural adaptation requirements; (5) Gisting and information extraction use cases where perfect accuracy is not required. Post-editing studies demonstrate 30-100% productivity gains when translators work from AI-generated first drafts rather than translating from scratch.

When Humans Win: Human translators maintain decisive advantages in contexts where: (1) Legal liability attaches to translation accuracy (contracts, patents, regulatory submissions); (2) Life-safety implications exist (medical records, pharmaceutical documentation, patient instructions); (3) Creative and stylistic quality is paramount (literature, marketing transcreation, brand voice); (4) Cultural adaptation and localization are required (idioms, humor, cultural references); (5) Source text contains ambiguity requiring real-world knowledge and contextual inference; (6) Certified or sworn translation status is required for official document acceptance.

The Quality Ceiling Concept: Research reveals that AI translation quality has approached asymptotic limits for high-resource pairs, with year-over-year improvements slowing from 5-10 BLEU points annually (2016-2020) to 1-2 points (2022-2024). This "quality ceiling" suggests that remaining gaps may represent fundamental limitations of data-driven approaches rather than engineering challenges solvable with more data or parameters. Large language models (GPT-4, Claude, Gemini) have pushed these boundaries through in-context learning and massive parameter scales (100B+), but even these systems show persistent gaps on challenging linguistic phenomena (discourse coherence, long-range coreference, pragmatic inference).

Guide Structure: This research synthesis is organized into 14 major sections examining: (1) Translation quality assessment methodologies; (2) Landmark research studies and their findings; (3) Domain-specific accuracy patterns; (4) Language pair performance variations; (5) Error analysis comparing AI and human patterns; (6) The "human parity" debate and critiques; (7) Post-editing research; (8) Metric validation studies; (9) Economic implications; (10) State-of-the-art 2024-2025 capabilities; (11) Future research directions; (12) Practical recommendations; (13) Research limitations; and (14) Evidence-based conclusions for translation decision-making.

Research Methodology in Translation Quality Assessment

Evaluating translation quality is fundamentally challenging because translation is not a single correct answer problem—multiple high-quality translations can differ significantly while remaining equally valid. This inherent variability has driven the development of sophisticated automatic metrics and structured human evaluation frameworks, each with distinct strengths, limitations, and appropriate use cases.

Automatic Metrics: The Quantitative Foundation

Automatic evaluation metrics enable rapid, reproducible, and cost-effective quality assessment, making large-scale benchmark comparisons feasible. However, metric selection significantly influences study conclusions, as different metrics capture different quality dimensions with varying degrees of human correlation.

BLEU (Bilingual Evaluation Understudy): The Legacy Standard

BLEU, introduced by Papineni et al. (2002) at IBM Research, remains the most widely cited translation metric despite known limitations. The metric combines modified n-gram precisions between a candidate translation and one or more reference translations:

$$\mathrm{BLEU} = \mathrm{BP} \cdot \exp\left(\sum_{n=1}^{4} w_n \log p_n\right)$$

Where:

• pₙ = modified n-gram precision for n-grams of order n (1-4)

• wₙ = uniform weights (typically 0.25 for each n-gram order)

• BP = brevity penalty (penalizes shorter translations)

How BLEU Works: BLEU counts matching n-grams (contiguous word sequences) between the candidate and reference translations, with modified precision to handle multiple matches. The brevity penalty discourages overly short translations that might artificially inflate precision. Scores range from 0 to 1 (or 0-100 when reported as percentages), with higher scores indicating greater n-gram overlap with reference translations.
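The counting procedure described above can be sketched in a few lines of Python. This is a minimal, unsmoothed, single-reference illustration, assuming whitespace tokenization and uniform weights; the function names `ngrams` and `sentence_bleu` are hypothetical, and production evaluations normally rely on a standardized implementation with corpus-level statistics rather than a sketch like this one.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, reference, max_n=4):
    """Unsmoothed single-reference BLEU on a 0-1 scale (illustrative only)."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        ref_counts = ngrams(ref, n)
        # Modified precision: clip each candidate count by its reference count.
        clipped = sum(min(count, ref_counts[gram])
                      for gram, count in cand_counts.items())
        total = sum(cand_counts.values())
        if clipped == 0 or total == 0:
            return 0.0  # without smoothing, any zero precision gives BLEU = 0
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty: 1 if the candidate is at least as long as the reference.
    bp = 1.0 if len(cand) >= len(ref) else math.exp(1 - len(ref) / len(cand))
    # Uniform weights of 1 / max_n over the n-gram orders.
    return bp * math.exp(sum(log_precisions) / max_n)
```

With this sketch, a candidate identical to its reference scores 1.0, a truncated but otherwise exact candidate is reduced only by the brevity penalty, and a paraphrase that shares no bigram with the reference scores 0.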

BLEU Limitations (Thompson & Koehn, 2020; Callison-Burch et al., 2006):

- Reference Dependency: BLEU requires high-quality human reference translations. When references are poor or diverge from valid alternative translations, BLEU scores become unreliable

- Synonym Blindness: BLEU cannot recognize semantic equivalence without exact lexical match. "The cat sat on the mat" and "The feline rested upon the rug" receive poor BLEU scores despite equivalent meaning

- Word Order Sensitivity: BLEU heavily penalizes valid word order variations that are natural in different languages but may not match reference word order

- Domain Variability: BLEU scores are not comparable across domains or language pairs—an English-French BLEU of 40 means different quality than an English-Chinese BLEU of 40

- Human Correlation: BLEU correlation with human judgments ranges from r=0.3 to r=0.7 depending on language pair and domain, making it unreliable for fine-grained quality distinctions

Despite these limitations, BLEU remains prevalent because it is language-independent, computationally efficient, and enables consistent comparisons across systems when used with the same test sets and references.

chrF (character n-gram F-score): Morphological Awareness

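chrF, developed by Popović (2015), addresses BLEU's word-level limitations by operating at the character level. The metric computes an F-score (a weighted harmonic mean of precision and recall) on character n-grams rather than word n-grams:

$$\mathrm{chrF}_\beta = (1 + \beta^2) \cdot \frac{\mathrm{chrP} \cdot \mathrm{chrR}}{\beta^2 \cdot \mathrm{chrP} + \mathrm{chrR}}$$

Where: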

• chrP = character n-gram precision

• chrR = character n-gram recall

• β = weight parameter (typically 3, prioritizing recall)

chrF Advantages: Character-level matching makes chrF particularly effective for morphologically rich languages (German, Finnish, Turkish, Arabic) where BLEU's word-level matching fails to capture valid morphological variations. Research shows chrF correlates more strongly with human judgments than BLEU for Slavic, Germanic, and Semitic language families, with correlation improvements of 0.05-0.15 in comparative studies.
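A simplified sketch of the character-level computation is shown below. It assumes whitespace is dropped before extracting n-grams, averages precision and recall over n-gram orders 1 to 6, and uses β = 3 as stated above; the helper names are hypothetical, and details such as whitespace handling differ between real implementations.

```python
from collections import Counter


def char_ngrams(text, n):
    """Counter of character n-grams, with spaces removed (a simplification)."""
    chars = text.replace(" ", "")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf(candidate, reference, max_n=6, beta=3.0):
    """Simplified chrF: average precision and recall over orders, then F-beta."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        cand, ref = char_ngrams(candidate, n), char_ngrams(reference, n)
        if not cand or not ref:
            continue  # text too short for this n-gram order
        overlap = sum((cand & ref).values())  # multiset intersection
        precisions.append(overlap / sum(cand.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    chr_p = sum(precisions) / len(precisions)
    chr_r = sum(recalls) / len(recalls)
    if chr_p + chr_r == 0:
        return 0.0
    return (1 + beta ** 2) * chr_p * chr_r / (beta ** 2 * chr_p + chr_r)
```

For example, `chrf("translations", "translation")` stays above 0.9 because almost every character n-gram is shared, whereas a word-level exact match would treat the two forms as entirely different tokens.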

TER (Translation Edit Rate): Human Effort Measurement

TER (Snover et al., 2006) measures the minimum number of edits (insertions, deletions, substitutions, shifts) required to transform the candidate translation into a reference translation, normalized by reference length. Lower TER scores indicate fewer required edits:

$$\mathrm{TER} = \frac{\text{number of edits}}{\text{reference length in words}}$$

TER Strengths: TER directly approximates post-editing effort, making it valuable for translation productivity research. Studies by Green (2014) and others demonstrate TER correlation with actual post-editing time (r=0.6-0.75), making it useful for predicting human effort from machine translation output. However, TER shares BLEU's reference dependency limitations.
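The edit-rate idea can be illustrated with a word-level edit distance. The sketch below is a deliberate simplification: it counts insertions, deletions, and substitutions only and omits the block-shift operation that full TER includes, so it can overestimate the score when phrases are merely reordered. The names `edit_distance` and `simple_ter` are hypothetical.

```python
def edit_distance(hyp, ref):
    """Word-level Levenshtein distance (insertions, deletions, substitutions)."""
    prev = list(range(len(ref) + 1))
    for i, h in enumerate(hyp, start=1):
        curr = [i]
        for j, r in enumerate(ref, start=1):
            cost = 0 if h == r else 1
            curr.append(min(prev[j] + 1,          # delete h from the hypothesis
                            curr[j - 1] + 1,      # insert r into the hypothesis
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


def simple_ter(hypothesis, reference):
    """Edits divided by reference length; ignores TER's shift operation."""
    hyp, ref = hypothesis.split(), reference.split()
    if not ref:
        raise ValueError("reference must not be empty")
    return edit_distance(hyp, ref) / len(ref)
```

A hypothesis that drops one word from a six-word reference needs a single insertion, giving a score of 1/6.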

COMET (Cross-lingual Optimized Metric for Evaluation of Translation): Neural Quality Assessment

COMET (Rei et al., 2020) represents the state of the art in neural reference-based metrics. Built on pre-trained multilingual encoders (initially XLM-RoBERTa, now various architectures), COMET learns to predict human quality judgments from embeddings of source, target, and reference texts.

COMET Architecture: COMET uses a siamese encoder structure where source, hypothesis, and reference are encoded separately, then combined through interaction layers that model translation adequacy and fluency. The model is trained on Direct Assessment (DA) data from WMT evaluations, learning to predict z-scored human scores rather than binary correctness.

COMET Performance: Research demonstrates COMET achieves significantly higher correlation with human judgments than BLEU or chrF:

- Kendall tau correlation with DA scores: 0.42-0.58 (BLEU: 0.25-0.35)

- Pearson correlation with human rankings: 0.65-0.78 (chrF: 0.45-0.60)

- System-level correlation across WMT tracks: consistently top-ranked

- Reduced variance across domains compared to n-gram metrics

COMET has become the preferred metric for academic research and competitive evaluation, with WMT (Workshop on Machine Translation) adopting it as a primary evaluation metric. The metric's neural foundation enables it to capture semantic similarity beyond surface lexical overlap, addressing BLEU's synonym blindness.
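Correlation figures like those quoted above come from comparing a metric's scores with human scores for the same translations. The following sketch shows how Pearson's r and a basic Kendall tau (the tau-a variant, where ties count as neither concordant nor discordant) can be computed. The function names are hypothetical, and shared-task evaluations may use other tau variants than the one shown here.

```python
import math
from itertools import combinations


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    std_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    std_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (std_x * std_y)


def kendall_tau(xs, ys):
    """Kendall tau-a: (concordant - discordant) / number of pairs."""
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(xs, ys), 2):
        sign = (x1 - x2) * (y1 - y2)
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    pairs = len(xs) * (len(xs) - 1) / 2
    return (concordant - discordant) / pairs
```

A metric that orders four translations exactly as the human raters do gets a tau of 1.0; swapping one adjacent pair makes one of the six pairs discordant and lowers tau to (5 - 1) / 6, roughly 0.67.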

BERTScore: Contextualized Semantic Similarity

BERTScore (Zhang et al., 2020) leverages pre-trained BERT embeddings to compute token-level similarity between candidate and reference translations. Unlike BLEU's exact match requirement, BERTScore uses cosine similarity between contextualized embeddings, enabling recognition of paraphrases and semantic equivalence:

$$F_{\mathrm{BERT}} = \frac{2 \, P_{\mathrm{emb}} \, R_{\mathrm{emb}}}{P_{\mathrm{emb}} + R_{\mathrm{emb}}}$$

Where P_emb and R_emb are computed via:

• Cosine similarity between BERT embeddings of tokens

• Greedy matching to find best reference token for each candidate token

• Precision: average max similarity from candidate to reference

• Recall: average max similarity from reference to candidate

BERTScore Advantages: BERTScore addresses BLEU's synonym blindness by recognizing that "big" and "large" are semantically similar in most contexts. Research shows BERTScore correlation with human judgments (r=0.6-0.75) exceeds BLEU by 0.2-0.3 points. The metric is particularly effective for evaluating paraphrastic translation alternatives that diverge lexically but convey equivalent meaning.
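The greedy matching step can be sketched without a neural model by passing in token vectors directly. In the sketch below the vectors are assumed to be supplied by the caller (in the real metric they would come from a contextual encoder), all vectors are assumed to be non-zero, and the function names are hypothetical. Refinements used in practice, such as importance weighting and baseline rescaling, are omitted.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def greedy_bertscore(cand_vecs, ref_vecs):
    """Return (precision, recall, F1) from lists of token embedding vectors."""
    # Precision: match each candidate token to its most similar reference token.
    precision = sum(max(cosine(c, r) for r in ref_vecs)
                    for c in cand_vecs) / len(cand_vecs)
    # Recall: match each reference token to its most similar candidate token.
    recall = sum(max(cosine(r, c) for c in cand_vecs)
                 for r in ref_vecs) / len(ref_vecs)
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

If hypothetical toy vectors place "big" and "large" close together, a candidate "big house" scored against the reference "large house" receives an F1 near 1, which is the behavior that distinguishes this family of metrics from exact-match counting.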

GEMBA (GPT Estimation Metric Based Assessment): LLM as Judge

GEMBA (Kocmi & Federmann, 2023) represents a recent paradigm shift: using large language models (GPT-3.5, GPT-4) as evaluation judges. The metric prompts LLMs to rate translation quality on standardized scales or rank multiple candidates.

GEMBA Findings: Research indicates GPT-4-based evaluation achieves human-level correlation on translation ranking tasks (Spearman r=0.85 with expert judgments), while enabling evaluation without reference translations (reference-free mode). This capability is transformative for scenarios where high-quality references are unavailable, though concerns about LLM evaluator bias and consistency remain active research topics.
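The general shape of LLM-as-judge scoring can be sketched as a prompt builder plus a reply parser. The prompt wording below is illustrative and is not the published GEMBA template; the function names are hypothetical, and the call to an actual model is intentionally left out because it depends on the provider. Omitting the reference yields the reference-free mode mentioned above.

```python
import re


def build_da_prompt(source, translation, src_lang, tgt_lang, reference=None):
    """Build a 0-100 scoring prompt; the wording is an illustrative assumption."""
    lines = [
        f"Score the following translation from {src_lang} to {tgt_lang} "
        "on a continuous scale from 0 to 100, where 0 means no meaning "
        "preserved and 100 means perfect meaning and grammar.",
        f'{src_lang} source: "{source}"',
    ]
    if reference is not None:
        # Reference-based mode: show the human translation as well.
        lines.append(f'{tgt_lang} human reference: "{reference}"')
    lines.append(f'{tgt_lang} translation: "{translation}"')
    lines.append("Score:")
    return "\n".join(lines)


def parse_score(reply):
    """Extract the first number in a model reply; None if absent or out of range."""
    match = re.search(r"\d+(?:\.\d+)?", reply)
    if match is None:
        return None
    score = float(match.group())
    return score if 0 <= score <= 100 else None
```

Rejecting unparseable or out-of-range replies, rather than guessing, keeps evaluator inconsistency visible instead of silently folding it into the scores.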

Human Evaluation Frameworks: The Gold Standard

While automatic metrics enable large-scale evaluation, human assessment remains the gold standard for translation quality. Structured human evaluation frameworks provide systematic approaches to capturing quality dimensions that automatic metrics miss.

MQM (Multidimensional Quality Metrics): Error Taxonomy Approach

MQM (Lommel et al., 2014; Lommel, 2018) provides a comprehensive error taxonomy framework for human translation evaluation. Developed through the QTLaunchPad and later TAUS initiatives, MQM classifies each error by type and by severity level, enabling fine-grained quality assessment.

Error categories:

- Accuracy: Addition, omission, mistranslation, untranslated

- Fluency: Grammar, punctuation, spelling, register

- Terminology: Inconsistent, inappropriate for domain

- Style: Awkward, inconsistent style

- Locale: Locale-specific formatting, addresses, dates

Severity levels and penalty points:

- Critical: Changes meaning, potentially harmful (5 points)

- Major: Significant meaning change, hard to recover (2.5 points)

- Minor: Minor meaning change, easy to recover (0.5 points)

- Neutral: No meaning change, preference only (0 points)

MQM Applications: MQM enables quality comparison across systems with meaningful error analysis. Research using MQM has revealed that AI translations produce different error patterns than humans—more terminology inconsistencies but fewer omission errors, for example. The framework's granularity supports root cause analysis for quality improvement.
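Using the severity weights listed above, an MQM-style penalty can be computed by summing the weight of each annotated error. The sketch below is a minimal illustration; the per-100-words normalization is an assumption added here to make texts of different lengths comparable, and the function names and annotation format are hypothetical.

```python
# Severity weights taken from the list above.
SEVERITY_WEIGHTS = {"critical": 5.0, "major": 2.5, "minor": 0.5, "neutral": 0.0}


def mqm_penalty(errors):
    """Sum severity weights over (category, severity) error annotations."""
    return sum(SEVERITY_WEIGHTS[severity] for _category, severity in errors)


def penalty_per_100_words(errors, word_count):
    """Length-normalized penalty (normalization scheme is an assumption)."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return 100 * mqm_penalty(errors) / word_count


annotations = [
    ("accuracy/mistranslation", "major"),
    ("fluency/grammar", "minor"),
    ("terminology/inconsistent", "minor"),
    ("style/awkward", "neutral"),
]
```

For these annotations the raw penalty is 2.5 + 0.5 + 0.5 + 0 = 3.5 points, or 1.4 points per 100 words for a 250-word text.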

DA (Direct Assessment): Comparative Quality Ranking

Direct Assessment (Graham et al., 2013, 2017), standardized through WMT evaluation campaigns, asks human evaluators to rate translation quality on a continuous scale (typically 0-100) without explicit reference comparison. Evaluators see source text and candidate translation, assessing adequacy (meaning preservation) and fluency (linguistic quality) independently or combined.

DA Standardization: WMT DA uses z-score normalization to account for individual rater biases (some evaluators rate systematically higher or lower). This standardization enables reliable comparison across large evaluation sets with multiple raters. DA scores have become the primary training target for neural metrics like COMET, bridging human evaluation and automatic assessment.
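The per-rater standardization can be sketched as follows: each rater's raw scores are converted to z-scores using that rater's own mean and standard deviation. This is a minimal illustration assuming the population standard deviation and a simple tuple format for ratings; the function name is hypothetical and the exact procedure in a given campaign may differ.

```python
from collections import defaultdict
from statistics import mean, pstdev


def z_normalize(ratings):
    """ratings: list of (rater_id, segment_id, raw_score) -> same with z-scores."""
    by_rater = defaultdict(list)
    for rater, _segment, score in ratings:
        by_rater[rater].append(score)
    # Per-rater mean and (population) standard deviation.
    stats = {rater: (mean(scores), pstdev(scores))
             for rater, scores in by_rater.items()}
    normalized = []
    for rater, segment, score in ratings:
        mu, sigma = stats[rater]
        # A rater who gives every segment the same score carries no signal.
        z = (score - mu) / sigma if sigma > 0 else 0.0
        normalized.append((rater, segment, z))
    return normalized
```

A strict rater who gives two segments 40 and 60 and a lenient rater who gives the same segments 80 and 100 end up with identical z-scores of -1 and +1, so their agreement on relative quality is no longer hidden by the difference in scale.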

PE (Post-Editing) Effort Measurement

Post-editing research (Green et al., 2013; Koponen et al., 2020) measures the practical effort required to bring machine translation output to acceptable quality. Metrics include:

- Temporal effort: Time spent post-editing (measured in seconds/segment)

- Technical effort: Number of keystrokes, mouse actions, edit operations

- Cognitive effort: Subjective difficulty ratings, eye-tracking measures

PE Research Findings: Studies consistently demonstrate productivity gains of 30-100% when translators post-edit MT rather than translate from scratch, with gains highest for related language pairs and technical domains. Quality outcomes show MT-PE output often matches or exceeds human-only translation due to combined strengths (MT speed + human refinement).

Human Parity Experiments

Human parity experiments represent the most rigorous test of AI translation capability: professional translators evaluate blind samples of AI and human translations without knowing which translations were produced by machines and which by humans, and researchers then determine whether quality differences are statistically detectable. These experiments directly address the "can AI match humans" question with empirical evidence.
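One common way to check whether a quality difference is statistically detectable is a paired bootstrap over per-segment scores. The sketch below is a generic illustration under stated assumptions, not the procedure of any particular study: it assumes each segment has one human-translation score and one machine-translation score, and the function names, resample count, and seed are hypothetical choices.

```python
import random


def bootstrap_mean_diff_ci(human, machine, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the mean paired difference (human - machine)."""
    if len(human) != len(machine) or not human:
        raise ValueError("need two non-empty score lists of equal length")
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    diffs = [h - m for h, m in zip(human, machine)]
    means = []
    for _ in range(n_resamples):
        # Resample segments with replacement and record the mean difference.
        sample = [rng.choice(diffs) for _ in diffs]
        means.append(sum(sample) / len(sample))
    means.sort()
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


def statistically_indistinguishable(human, machine):
    """True if the confidence interval for the mean difference contains zero."""
    lower, upper = bootstrap_mean_diff_ci(human, machine)
    return lower <= 0.0 <= upper
```

An interval that contains zero means only that the experiment failed to detect a difference. With few segments or noisy raters the interval is wide, so this outcome is weaker evidence than a demonstration of equivalence.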

Test Sets and Benchmarks: Standardized Evaluation

Standardized test sets enable fair comparison across systems and time, providing stable benchmarks for tracking progress. Major evaluation benchmarks include:

WMT (Workshop on Machine Translation) Shared Tasks: The annual WMT conference (organized by ACL SIG-MT) provides the most influential translation evaluation framework. Each year, WMT releases blind test sets for multiple language pairs, with systems evaluated by both automatic metrics and human judges. WMT tracks include news translation, biomedical translation, and low-resource language challenges. WMT results (Bojar et al., annual proceedings) represent the canonical benchmark for comparing translation system quality.

FLORES-200 (NLLB Team, 2022; extending FLORES-101 from Goyal et al., 2022): Developed by Meta AI as part of the No Language Left Behind (NLLB) initiative, FLORES-200 provides parallel evaluation data across 200 languages in three splits (dev, devtest, test). The sentences are drawn from English-language Wikimedia projects and translated into all target languages by professional translators, enabling consistent evaluation of low-resource translation systems.

OPUS Test Sets: The Open Parallel Corpus provides extensive test sets from publicly available parallel text, including Tatoeba (short sentences), OpenSubtitles (movie subtitles), and Wikipedia. While OPUS data quality varies, the scale enables broad language coverage and continuous evaluation.

Domain-Specific Benchmarks: Specialized benchmarks address domain-specific quality concerns: WMT Biomedical track (medical translation), KFTT (Japanese-English translation of Wikipedia articles about Kyoto), and custom enterprise test sets for legal/financial domains.

Landmark Research Studies Analysis

The AI translation research landscape is defined by several landmark studies that established human parity claims, advanced architectural understanding, or challenged prevailing assumptions. This section analyzes the most influential publications and their findings.

Google Neural Machine Translation (GNMT): Wu et al. (2016)

The Google Neural Machine Translation paper (Wu et al., 2016), released on arXiv and later presented at ACL, marked the inflection point from statistical to neural machine translation at production scale. The paper described Google's transition from phrase-based SMT to NMT for Google Translate, representing one of the largest-scale translation system deployments in history.

Technical Contributions: GNMT introduced several architectural innovations that became standard in NMT:

- Deep LSTM Encoder-Decoder: 8-layer LSTM architecture with residual connections, demonstrating that depth improves translation quality

- Attention Mechanism: Global attention connecting decoder to all encoder states, enabling better long-range dependency handling

- Wordpiece Segmentation: Subword tokenization (now standard via SentencePiece, BPE) handling rare words and morphological variation

- Quantization: 8-bit model compression enabling production deployment without latency degradation
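The subword idea behind wordpiece segmentation in the list above can be illustrated with a greedy longest-match-first splitter. This is a sketch of the segmentation step only, under stated assumptions: the vocabulary is a hypothetical toy set, the "##" continuation marker follows a convention popularized by later tokenizers rather than GNMT's own markers, and learning the vocabulary from data is out of scope.

```python
def wordpiece_tokenize(word, vocab, unk="[UNK]"):
    """Split one word into the longest matching vocabulary pieces, left to right."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # mark word-internal pieces
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # no piece matches: the whole word is unknown
        pieces.append(piece)
        start = end
    return pieces


# Hypothetical toy vocabulary for illustration.
TOY_VOCAB = {"trans", "##lat", "##ion", "##s", "un"}
```

With this toy vocabulary, "translations" is split into "trans", "##lat", "##ion", and "##s", so a rare inflected form is represented by frequent pieces instead of a single unknown token.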

Quality Claims and Findings: Wu et al. reported GNMT achieved average BLEU improvements of 11% over the previous phrase-based system across major language pairs. Side-by-side human evaluations showed GNMT reduced translation errors by 60% compared to the previous system. For Chinese-English, the system approached human quality on some test sets—a claim that generated significant attention and skepticism.

Methodology Critique: Academic critics (including Koehn & Knowles, 2017) noted that GNMT's evaluation compared machine translation against "average" human translation rather than professional translators working with proper resources and time. The test sets (mainly news commentary) favored general-domain content where NMT excels. Despite these caveats, GNMT demonstrated that neural approaches had definitively surpassed statistical methods, catalyzing the industry-wide transition to NMT.

Microsoft's Human Parity Paper: Hassan et al. (2018)

The 2018 arXiv paper by Hassan et al., "Achieving Human Parity on Automatic Chinese to English News Translation," made the most ambitious human parity claim in MT history. The research described a system achieving "human parity" when measured by professional translator evaluation of blind test samples.

Experimental Design: Microsoft's research employed a rigorous evaluation protocol designed to address methodological concerns from earlier parity claims:

- Professional Evaluators: Bilingual experts with domain knowledge (news translation), not crowd workers or non-professionals

- Blind Evaluation: Evaluators did not know which translations were human or machine, preventing bias

- Multiple References: Test set included multiple professional human translations, recognizing translation variability

- Statistical Testing: Results evaluated for statistical significance, with confidence intervals reported

Technical Innovations: The parity system combined multiple techniques: (1) Dual learning—training simultaneously on forward and backward translation to improve quality through bidirectional constraint; (2) Deliberation networks—decoding in two passes with refinement; (3) Agreement regularization—training on left-to-right and right-to-left models simultaneously; (4) Joint training on source-to-target and target-to-source with language model objectives.

Results and Claims: On the WMT 2017 Chinese-English news test set, Microsoft's system achieved bilingual evaluation scores indistinguishable from human translation (within confidence intervals). The paper claimed "human parity" specifically for news translation on this language pair—a carefully scoped claim that nonetheless generated substantial discussion.

Controversy and Peer Review: The "human parity" claim generated significant academic debate:

- Limited Scope: Critics noted the claim applied only to news translation, a domain favorable to data-rich NMT systems

- Single Language Pair: Chinese-English, while commercially important, does not generalize to the full translation problem

- Test Set Familiarity: WMT test sets are publicly available; industry researchers suspected system tuning on similar data

- Definition of Parity: The paper used "statistical indistinguishability" rather than equivalence on all quality dimensions

Despite controversy, the paper demonstrated that with sufficient engineering effort, machine translation could achieve parity on carefully scoped tasks—a milestone in AI capabilities even if the "human parity" framing overstated general applicability.

DeepL Research Publications

DeepL, while more commercially focused than academic, has contributed to NMT research through technical blog posts, architecture descriptions, and participation in shared tasks. DeepL's system is particularly noted for quality on European language pairs, often outperforming Google Translate in user preference studies.

Transformer Architecture Improvements: DeepL's technical descriptions indicate proprietary enhancements to the standard Transformer architecture, including: (1) Novel attention mechanisms for improved long-range dependency modeling; (2) Advanced training techniques for low-resource pairs within the European language family; (3) Inference optimization achieving sub-100ms latency for production use.

European Language Excellence: Independent evaluations (Kocmi et al., various WMT proceedings) consistently show DeepL achieving top-tier results on English-German, English-French, and English-Spanish pairs. DeepL's focus on European languages—where training data is abundant and linguistic similarity aids transfer learning—has enabled quality advantages in this commercially important segment.

Meta AI's No Language Left Behind (NLLB) Studies

Meta AI's NLLB project (NLLB Team, 2022), later published in Nature, represents the most ambitious attempt to extend high-quality translation to low-resource languages. The research addresses the quality disparity between high-resource pairs (English-French) and low-resource pairs (English-Swahili, English-Bengali) that has characterized the AI translation era.

Scale and Scope: NLLB encompasses 200 languages (compared to Google's 133 or DeepL's ~30 at the time), with particular focus on African, South Asian, and Indigenous languages previously neglected by major translation providers. The training corpus includes 18 billion parallel sentences across all language pairs.

Technical Innovations:

- Conditional Compute: Sparsely activated expert layers enabling efficient multilingual training without interference between unrelated languages

- Self-Supervised Pretraining: Large-scale monolingual pretraining (600B tokens) before parallel fine-tuning

- LASER3 Sentence Encoders: Multilingual sentence embeddings used to mine parallel sentences from web text for low-resource languages

- Human-in-the-Loop Data: Crowdsourcing platform for collecting parallel data from native speakers of low-resource languages

Quality Findings: NLLB achieved average BLEU improvements of 44% over previous state-of-the-art on low-resource pairs, though absolute scores remain substantially below high-resource levels. For example, while English-French achieves BLEU scores of 35-40, English-Swahili remains at 15-20 despite NLLB improvements. The research demonstrates that data scarcity remains the fundamental limiting factor for AI translation quality.

Large Language Model Translation Studies (2023-2024)

The emergence of GPT-4, Claude, Gemini, and other LLMs has generated renewed research interest in translation capabilities of general-purpose language models not specifically trained for translation. Recent studies (2023-2024) have examined whether emergent translation abilities match or exceed dedicated NMT systems.

GPT-4 Translation Research: Studies by Jiao et al. (2023), Hendy et al. (2023), and others evaluated GPT-4 on WMT test sets and domain-specific benchmarks:

- High-Resource Performance: GPT-4 achieves competitive or superior COMET scores compared to Google Translate and DeepL on English-French, English-German, and English-Spanish news translation

- In-Context Learning: Few-shot prompting with translation examples significantly improves GPT-4 quality, particularly for low-resource pairs and domain-specific terminology

- Long-Document Advantages: Context windows of 128K tokens enable document-level translation with improved coherence over sentence-by-sentence NMT

- Error Patterns: GPT-4 produces different error types than NMT—more fluent but prone to hallucinations and confidence-based over-translation
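The in-context learning setup in the list above amounts to placing a few source-target example pairs in the prompt before the sentence to be translated. The sketch below builds such a prompt as plain text; the layout and function name are hypothetical, and the call to a specific model is omitted because it depends on the provider.

```python
def build_few_shot_prompt(examples, source, src_lang, tgt_lang):
    """Assemble an instruction, k example pairs, and the new source sentence."""
    blocks = [f"Translate from {src_lang} to {tgt_lang}."]
    for example_source, example_target in examples:
        blocks.append(f"{src_lang}: {example_source}\n{tgt_lang}: {example_target}")
    # The final block leaves the target side empty for the model to complete.
    blocks.append(f"{src_lang}: {source}\n{tgt_lang}:")
    return "\n\n".join(blocks)


# Hypothetical in-domain examples that fix the terminology and register.
EXAMPLES = [
    ("Save the file.", "Speichern Sie die Datei."),
    ("Open the menu.", "Öffnen Sie das Menü."),
]
```

Choosing examples from the same domain as the new sentence is what lets the prompt steer terminology, which is why few-shot gains are reported to be largest for domain-specific content.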

Comparative Findings: Research by Agrawal et al. (2024) and others suggests that while GPT-4 demonstrates remarkable zero-shot translation capabilities, dedicated NMT systems (Google Translate API, DeepL) remain more cost-effective and consistently reliable for production use. LLMs excel at complex linguistic tasks (handling ambiguity, translating with context) but introduce new risks (hallucinations, inconsistent behavior) that dedicated systems avoid through task-specific optimization.

Accuracy by Domain: Research Findings

Translation quality varies dramatically by domain, with AI systems showing strong performance on some content types while struggling with others. Domain-specific research reveals patterns that inform practical deployment decisions.

News Translation: The Favorable Domain

News translation has become the standard benchmark for AI translation because it represents the domain where NMT achieves highest quality. WMT competition results consistently show AI systems approaching or achieving human parity for news translation on major language pairs.

WMT Competition Results: Annual WMT news translation tracks demonstrate consistent progress:

- English-German WMT winners 2019-2023 achieved COMET scores of 85-92% of human references

- English-French and English-Spanish show similar patterns, with top systems within 5-10% of human quality

- Named entity recognition remains challenging—studies show 10-20% error rates on person and organization names across languages

- Idiomatic expressions and culturally-specific references show 30-40% mistranslation rates even in high-quality systems

Why News Is Favorable: Several factors make news translation amenable to AI: (1) Abundant training data (parallel news corpora, Common Crawl news); (2) Standard language without specialized terminology; (3) Factual, informational content without emotional nuance; (4) Short sentence structures typical of journalistic style; (5) Higher error tolerance than legal/medical domains (errors are embarrassing but not life-threatening).

Technical/IT Documentation: AI's Sweet Spot

Technical documentation—software manuals, API documentation, knowledge bases—represents perhaps the strongest domain for AI translation deployment. Research consistently shows near-human parity for well-structured technical content.

Terminology Consistency Research: Studies by Specia et al. (2017) and others demonstrate that NMT systems excel at maintaining terminology consistency when training data contains domain-specific parallel corpora. Technical terms are often borrowed across languages ("database," "algorithm," "function") or follow regular morphological patterns (English "-tion" → Spanish "-ción"), which makes their translations predictable.

Syntactic Complexity Handling: Technical documentation typically employs straightforward sentence structures—imperative instructions, declarative definitions, procedural steps. These patterns align well with NMT training on large-scale web corpora, where similar structures dominate. Complex subordination, poetic language, and rhetorical devices that challenge AI are rare in technical content.

Industry Adoption Research: Studies of enterprise MT adoption (e.g., Cadwell et al., 2018; Rothwell, 2020) confirm that localization teams report highest satisfaction with AI translation for technical content, often achieving productivity gains of 50-100% with light post-editing. Major technology companies (Microsoft, Adobe, Autodesk) have widely deployed AI-first workflows for documentation localization.

Legal Translation: Human Superiority Maintained

Legal translation represents one of the most challenging domains for AI systems, with research consistently showing significant quality gaps that make human translation essential for high-stakes legal content.

High-Stakes Accuracy Requirements: Legal translation demands near-perfect precision: a single mistranslated clause can alter contractual obligations, invalidate patent protection, or cause regulatory non-compliance. Research by Bhatia et al. (various) and studies from legal translation scholars demonstrate that legal language contains:

- Performative Language: Words that constitute legal acts ("I hereby grant," "the parties agree") where mistranslation voids meaning

- Polysemous Terms: Legal homonyms with context-dependent meanings (English "consideration" in contract law vs. general usage)

- System-Specific Concepts: Legal institutions without direct equivalents (common law "trust" vs. civil law jurisdictions)

- Archaic Structures: Shall/may/must distinctions with precise legal implications that AI often conflates

Ambiguity Resolution Research: Legal texts frequently contain intentional ambiguity requiring interpretive judgment. AI systems struggle with ambiguity resolution because they lack: (1) Knowledge of applicable law and precedent; (2) Understanding of contractual context and business intent; (3) Ability to recognize when source ambiguity should be preserved vs. resolved. Studies show AI legal translation error rates of 15-25% on contracts, far exceeding acceptable thresholds.

Certified Translation Requirements: Legal documents requiring certified translation (immigration, litigation, regulatory) mandate human translator accountability. AI translations cannot be certified because no human takes responsibility for accuracy. This structural limitation ensures human involvement regardless of AI quality improvements.

Medical/Healthcare Translation: Life-Safety Implications

Medical translation research reveals the life-critical nature of translation accuracy. Unlike news translation where errors cause embarrassment, medical translation errors can cause harm or death.

Life-Critical Error Studies: Research by Flores et al. (2003), Horn et al. (2018), and others documents real-world harm from medical translation errors:

- A study of pharmacy translations found 8% of Spanish prescription labels contained errors potentially affecting medication safety

- Emergency department research identified interpretation errors in 18% of encounters with limited English proficiency patients

- AI-translated medical device instructions showed 20-30% error rates on dosing and contraindications

Terminology Precision Requirements: Medical terminology demands absolute precision. "Hypertension" vs. "hypotension," "contraindicated" vs. "caution advised" represent life-or-death distinctions. AI systems, trained on web corpora with variable quality, frequently conflate near-synonyms or miss drug interaction warnings that require specialized medical knowledge.

Regulatory Quality Requirements: Medical translation operates under stringent regulatory frameworks (FDA in US, EMA in Europe) requiring documented quality processes, translator qualifications, and accuracy verification. AI systems cannot satisfy these requirements, ensuring continued human dominance in medical translation.

Literary/Creative Translation: The Human Creativity Advantage

Literary translation represents the domain where human superiority is most pronounced and least contested. Research in computational literary studies confirms AI's fundamental limitations with creative, aesthetic, and culturally-embedded text.

Style and Voice Preservation: Literary translation requires preserving authorial voice, stylistic choices, and aesthetic effects across languages. Research by Jones (2017), Kenny (2018), and others in translation studies demonstrates that literary style involves choices at every linguistic level that AI systems lack the aesthetic judgment to replicate:

- Register Variation: Character voice differentiation (dialect, education level, social class markers)

- Rhythmic and Phonetic Effects: Alliteration, meter, sound symbolism that cannot transfer directly across languages

- Stylistic Patterning: Recurring linguistic choices that create textual coherence and meaning

- Intertextuality: References to other texts, cultural touchstones that require recognition and recreation

Metaphor and Idiom Studies: Research on metaphor translation (Schäffner, 2004; Dickins, 2005) demonstrates that metaphors cannot be translated literally without becoming nonsense. Translating "time is money" literally into languages without this conceptual metaphor destroys meaning. AI systems, trained on parallel corpora, often produce over-literal translations of metaphorical language, failing to recognize figurative usage or recreate appropriate target-language equivalents.

Translation as Creative Rewriting: Contemporary translation theory (Berman, 2018; Venuti, 2008) emphasizes that literary translation is a creative act of rewriting, not mechanical transfer. The translator makes aesthetic judgments, interpretive decisions, and cultural adaptations that require human cultural competence and literary judgment, capabilities that remain far beyond AI systems.

Marketing/Transcreation: Cultural Adaptation Challenges

Marketing translation (often termed "transcreation" to emphasize its creative nature) requires adapting persuasive messages across cultural contexts. Research demonstrates that AI systems struggle with the cultural adaptation central to effective marketing localization.

Cultural Adaptation Research: Studies of international marketing campaigns (e.g., Taylor, 2018; de Mooij, 2018) document the necessity of cultural adaptation beyond literal translation:

- Value System Alignment: Different cultures emphasize different values (individualism vs. collectivism, achievement vs. harmony) requiring message reframing

- Symbolism and Color: Visual and linguistic symbols carry different connotations across cultures

- Humor and Wordplay: Puns, jokes, and rhetorical devices rarely translate literally and require creative recreation

- Call-to-Action Optimization: Persuasive effectiveness varies by culture, requiring adaptation of CTAs

Persuasive Language Challenges: Marketing language employs rhetorical devices—ethos, pathos, logos—that require cultural calibration. AI systems, lacking cultural competence and persuasive intent modeling, produce linguistically correct but culturally inappropriate translations that fail to achieve marketing objectives.

Language Pair Specific Research

AI translation quality varies dramatically by language pair, driven by data availability, typological distance, and script differences. Language-specific research reveals patterns that inform deployment decisions for multilingual content strategies.

High-Resource Pairs: Near-Human Performance

High-resource language pairs—those with abundant parallel training data (100M+ sentence pairs)—show the strongest AI translation performance. Research on English-French, English-German, and English-Spanish consistently demonstrates near-human quality for general-domain content.

Near-Human Performance Studies: Research findings across WMT proceedings, Hassan et al. (2018), and recent LLM studies confirm:

- COMET scores within 2-5% of human references for news and technical domains

- Human parity claims statistically supported in blind evaluations for specific domains

- Post-editing productivity gains of 50-100% demonstrate practical equivalence

- Remaining gaps concentrated in: creative content, culturally-specific references, and ambiguous constructions

Remaining Gap Analysis: Even for high-resource pairs, research identifies persistent quality gaps: (1) Regional variant handling (European vs. Latin American Spanish); (2) Idiomatic expression translation (30-40% error rates); (3) Named entity consistency; (4) Register and formality level maintenance. These gaps, while smaller than those for low-resource pairs, remain significant for applications requiring high precision.

English-Spanish/Portuguese: Strong Performance with Regional Challenges

English-Spanish and English-Portuguese represent particularly strong AI translation pairs due to abundant training data, linguistic similarity (Indo-European, relatively similar syntax), and high commercial demand driving system optimization.

Research Findings: Studies show BLEU scores of 35-45 and COMET scores of 0.85-0.92 for these pairs, placing them among the highest-quality AI translation combinations. However, research also reveals challenges:

- Regional Variant Confusion: AI systems trained predominantly on one variant (e.g., European Spanish) produce inappropriate vocabulary and grammar for other variants (Latin American Spanish)

- Formality (T-V Distinction): Spanish and Portuguese require explicit register choices (tú/usted/você) that AI systems choose inconsistently without context

- Brazilian vs. European Portuguese: Quality gaps exist between the variants, with Brazilian Portuguese typically better served due to larger internet corpus

English-Chinese/Japanese/Korean: Typological Distance Impact

East Asian language pairs present distinct challenges for AI translation due to fundamental typological differences from English: writing systems (Chinese characters, Japanese kanji and kana, Korean Hangul) that differ from the Latin alphabet, SOV word order in Japanese and Korean vs. SVO in English, and extensive grammatical marking through particles rather than inflection.

Character-Level vs. Word-Level Challenges: Chinese, Japanese, and Korean require different tokenization approaches than alphabetic languages:

- Chinese: Word segmentation is non-trivial (no spaces between words); character-level models often outperform word-level

- Japanese: Mixed scripts (kanji, hiragana, katakana) require handling multiple character sets within single sentences

- Korean: Agglutinative morphology produces many rare word forms, challenging vocabulary coverage

Quality Research Findings: Despite these challenges, English-Chinese translation has achieved remarkable progress. The human parity claim of Hassan et al. (2018) for Chinese-English news demonstrated state-of-the-art capability. However, quality gaps remain larger than for European pairs: COMET scores are typically 10-15% below human references, with particular challenges in: (1) Honorific and politeness level maintenance; (2) Zero pronoun resolution; (3) Classifier and measure word selection; (4) Classical/literary Chinese expressions.

English-Arabic: Diglossia and Dialect Challenges

Arabic presents unique challenges for AI translation research due to diglossia (Modern Standard Arabic vs. regional dialects) and complex morphology. Research reveals significant quality gaps for this important language pair.

Diglossia Challenges: Arabic exists in two primary forms:

- Modern Standard Arabic (MSA): Formal written language used in news, literature, official documents—most AI training data is MSA

- Regional Dialects: Egyptian, Levantine, Gulf, Maghrebi dialects used in speech, social media, informal communication—limited parallel data

Research by Tiedemann (2012), Durrani et al. (various), and the NLLB team demonstrates that AI systems trained primarily on MSA perform poorly on dialectal Arabic, often producing MSA output from dialectal input or failing to capture dialect-specific vocabulary. This limits AI translation utility for social media monitoring, informal communication, and regional content.

Morphological Complexity: Arabic's root-and-pattern morphology generates thousands of word forms from triliteral roots. While subword tokenization (BPE) handles this reasonably well, research shows morphological analysis integration improves translation quality, particularly for rare forms and proper names.

Low-Resource Language Pairs: The Persistent Challenge

Low-resource language pairs, those with limited parallel training data (<1M sentence pairs), remain the largest quality challenge in AI translation. Research consistently shows 30-50% quality gaps between high-resource and low-resource pairs.

Data Scarcity Impact: Research by Koehn & Knowles (2017), the NLLB team (2022), and others quantifies the relationship between training data and quality:

- English-French (500M+ parallel sentences): BLEU 35-40, COMET 0.88-0.92

- English-Swahili (1M parallel sentences): BLEU 15-20, COMET 0.65-0.70

- English-Sinhala (200K parallel sentences): BLEU 8-12, COMET 0.45-0.55

The NLLB research demonstrates that while multilingual training and transfer learning improve low-resource quality, fundamental data scarcity limits remain. Languages with minimal digital presence (many African, Indigenous, and endangered languages) face translation quality barriers that additional data collection, not just algorithmic improvement, would address.
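
As a quick check on the figures listed above, the following sketch computes how far each lower-resource pair falls below English-French. It uses the midpoint of each quoted range, which is a simplification made for illustration only; the function and variable names are hypothetical.

```python
# Score ranges quoted in the list above. Using midpoints is an
# illustrative simplification, not a method from the cited studies.
SCORES = {
    "English-French": {"bleu": (35, 40), "comet": (0.88, 0.92)},
    "English-Swahili": {"bleu": (15, 20), "comet": (0.65, 0.70)},
    "English-Sinhala": {"bleu": (8, 12), "comet": (0.45, 0.55)},
}


def midpoint(bounds):
    """Return the midpoint of a (low, high) range."""
    low, high = bounds
    return (low + high) / 2


def relative_gap(pair, metric, baseline="English-French"):
    """Fraction by which `pair` falls below `baseline` on `metric` midpoints."""
    base = midpoint(SCORES[baseline][metric])
    return (base - midpoint(SCORES[pair][metric])) / base


for pair in ("English-Swahili", "English-Sinhala"):
    bleu_gap = relative_gap(pair, "bleu")
    comet_gap = relative_gap(pair, "comet")
    print(f"{pair}: BLEU gap {bleu_gap:.0%}, COMET gap {comet_gap:.0%}")
```

On these midpoints the size of the gap depends heavily on the metric: roughly 25% (Swahili) and 44% (Sinhala) on COMET, but roughly 53% and 73% on BLEU. Any single "percent gap" figure should therefore be read together with the metric it was computed on.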

Indonesian-Malay: Regional Variation Studies

For Translife's regional context, research on Indonesian and Malay translation is particularly relevant. While less studied than major European or East Asian pairs, recent research examines quality and challenges for these important Southeast Asian languages.

Quality Research Findings: Studies (e.g., Larasati, 2019; NLLB evaluations) show that Indonesian-English translation achieves moderate-to-good quality due to: (1) Increasing parallel corpus availability from government and business translation; (2) Relatively straightforward morphology compared to other Austronesian languages; (3) Latin script eliminating character-level challenges.

Regional Variation Challenges: Research identifies specific challenges: (1) Bahasa Indonesia vs. Bahasa Malaysia differences in vocabulary and spelling; (2) Regional dialects (Javanese influence, regional Malay variants) underrepresented in training data; (3) Code-switching between Indonesian and English, common in business contexts and requiring special handling; (4) Formal vs. informal register distinctions, less marked than in some languages but still requiring attention.

Error Analysis: AI vs Human Patterns

Understanding the types and patterns of errors produced by AI and human translators is essential for quality risk assessment and workflow design. Research reveals distinctly different error profiles that inform hybrid workflow optimization.

Common AI Translation Errors

Hallucinations and Fabrications: Perhaps the most concerning AI error type is hallucination—generating content not present in the source text. Research on neural machine translation (Koehn & Knowles, 2017; Raunak et al., 2021) documents that NMT systems occasionally "hallucinate" phrases, proper names, or even entire clauses that appear plausible but have no source basis:

- Named Entity Hallucinations: Systems occasionally invent names, numbers, or dates that seem contextually appropriate but are incorrect

- Phrase Repetition: NMT systems sometimes repeat phrases from earlier in the document that don't belong in current context

- Confident Misinformation: LLMs (GPT-4, Claude) may confidently provide background information that wasn't in the source

Research by Raunak et al. (2021) found that 5-10% of NMT translations contain some form of hallucination, with rates higher for low-resource languages and long sentences. This error type is particularly dangerous because hallucinated content often appears fluent and plausible, evading casual quality review.

Context Window Limitations: AI systems operate with finite context windows (from 512 tokens to 128K tokens, depending on the system), leading to coherence failures beyond window boundaries. Research documents:

- Coreference Failures: Pronouns and references fail to resolve correctly when antecedents fall outside the context window

- Terminology Inconsistency: Technical terms translate differently across segments when term introduction occurs outside current context

- Discourse Coherence: Long-range discourse markers ("however," "on the other hand") lose connection to prior arguments
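
To make the window-boundary problem above concrete, the sketch below splits a document into chunks that fit a token budget and carries the last few sentences of each chunk forward as context for the next. This is one common mitigation, not a technique taken from the studies cited here; the function name is hypothetical and token counts are approximated by whitespace-separated words.

```python
def overlapping_windows(sentences, max_tokens=512, context_sentences=2):
    """Yield (context, chunk) pairs for translating a long document.

    `chunk` holds the sentences to translate; `context` repeats the tail
    of the previous chunk so that pronouns and terms near a boundary
    still have their antecedents available.
    Token counts are approximated by whitespace splitting (an assumption).
    """
    chunk, size, context = [], 0, []
    for sentence in sentences:
        n_tokens = len(sentence.split())
        # Close the current chunk when adding this sentence would overflow.
        if chunk and size + n_tokens > max_tokens:
            yield context, chunk
            context = chunk[-context_sentences:] if context_sentences else []
            chunk, size = [], 0
        chunk.append(sentence)
        size += n_tokens
    if chunk:
        yield context, chunk


doc = ["The pump has a valve.", "It must be closed first.", "Then open the cover."]
for context, chunk in overlapping_windows(doc, max_tokens=10, context_sentences=1):
    print("context:", context, "| translate:", chunk)
```

Anything outside both the chunk and the carried context remains invisible to the system, which is where the coreference and terminology failures listed above arise.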

Pronoun and Coreference Errors: Research consistently identifies coreference resolution as a major AI weakness. Studies (e.g., Stymne & Ahrenberg, 2012; Guillou et al., 2016) show that gendered pronouns, zero pronouns, and complex reference chains are mistranslated at rates of 20-40% for challenging language pairs:

- Gender agreement errors when translating between languages with different gender systems

- Zero pronoun resolution (common in Chinese, Japanese, Spanish) requiring inference

- Ambiguous pronoun antecedents where human judgment resolves context-dependent references

Number and Date Mistranslation: Research documents systematic errors in numeric translation:

- Large number confusion (million/billion), particularly between languages with different number naming conventions

- Date format errors (US MM/DD/YYYY vs. international DD/MM/YYYY) not automatically converted

- Unit conversion failures requiring explicit conversion (miles to kilometers, Fahrenheit to Celsius)
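
The date-format problem above lends itself to a simple automated check. The sketch below flags numeric dates that are valid under both the MM/DD/YYYY and DD/MM/YYYY readings, so a reviewer can confirm them against the source. The pattern and function name are illustrative assumptions and cover only slash-separated dates.

```python
import re

# Matches slash-separated numeric dates such as 03/04/2024 (illustrative only).
DATE_RE = re.compile(r"\b(\d{1,2})/(\d{1,2})/(\d{4})\b")


def ambiguous_dates(text):
    """Return dates that are valid under both MM/DD and DD/MM readings."""
    hits = []
    for match in DATE_RE.finditer(text):
        first, second = int(match.group(1)), int(match.group(2))
        # Ambiguous only if both parts could be a month and they differ.
        if 1 <= first <= 12 and 1 <= second <= 12 and first != second:
            hits.append(match.group(0))
    return hits


print(ambiguous_dates("Due 03/04/2024, signed 25/12/2023, filed 05/05/2024."))
```

Dates such as 25/12/2023 are not flagged because only one reading is possible, and 05/05/2024 is not flagged because both readings give the same day.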

Terminology Inconsistency: While AI systems can maintain consistency within a context window, research shows they struggle with document-level terminology coherence:

- Technical term variation when multiple valid translations exist ("database" vs. "data base")

- Company/product name inconsistency when not in training data

- Acronym handling failures—expanding, preserving, or translating inconsistently
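
Document-level terminology drift of the kind listed above can be surfaced with a glossary check. The sketch below reports source terms that were rendered with more than one target variant across a document. It is a minimal illustration using case-insensitive substring matching; the function name, glossary structure, and example sentences are assumptions, and a production check would need tokenization and morphological handling.

```python
from collections import defaultdict


def inconsistent_terms(segments, glossary):
    """Find source terms translated with more than one target variant.

    segments: list of (source_text, target_text) pairs.
    glossary: dict mapping a source term to its known target variants.
    Matching is plain case-insensitive substring search (a simplification).
    """
    seen = defaultdict(set)
    for source, target in segments:
        src, tgt = source.lower(), target.lower()
        for term, variants in glossary.items():
            if term.lower() not in src:
                continue
            for variant in variants:
                if variant.lower() in tgt:
                    seen[term].add(variant)
    return {term: found for term, found in seen.items() if len(found) > 1}


glossary = {"database": ["base de datos", "banco de datos"]}
segments = [
    ("Open the database.", "Abra la base de datos."),
    ("Close the database.", "Cierre el banco de datos."),
]
print(inconsistent_terms(segments, glossary))
```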

Over-Literal Translation: AI systems, trained on parallel corpora that often include literal translations, sometimes produce overly literal output that misses idiomatic meaning:

- Idiom mistranslation: "kick the bucket" translated literally as striking a pail

- Collocation errors: producing grammatically correct but unnatural word combinations

- Figurative language translated denotatively without recognition of non-literal meaning

Cultural Insensitivity: Research documents AI failures at cultural adaptation—translating culture-specific references without recognition of their cultural boundedness, or producing translations that are linguistically correct but culturally inappropriate.

Human Translator Error Patterns

Research on human translation quality reveals error patterns distinct from AI systems, often related to human cognitive limitations rather than knowledge gaps.

Fatigue-Related Errors: Studies of translator productivity and quality (e.g., Mosca & Schaeffer, 2019) demonstrate that translation quality degrades with fatigue over work sessions:

- Error rates increase 20-40% after 4+ hours of continuous translation

- Omission errors particularly increase with fatigue

- Revision and self-correction frequency decreases as cognitive resources deplete

Consistency Lapses: Unlike AI systems that apply patterns uniformly, human translators occasionally produce inconsistency errors:

- Terminology variation across long documents where term choice was established early

- Style and register shifts within single documents

- Number/date formatting inconsistencies

Terminology Gaps: Human translators occasionally lack specialized terminology knowledge, particularly in emerging technical fields, producing circumlocutions or incorrect term choices that domain-trained AI systems might handle correctly through training on specialized corpora.

Time Pressure Effects: Research on translation under time constraints (e.g., Ehrensberger-Dow & Perrin, 2013) shows that rushed translation produces distinct error patterns: more literal translation, less revision, and higher error rates on complex syntactic structures.

Error Severity Comparison

Research using MQM and similar frameworks enables comparison of error severity between AI and human translation:

- Critical Errors: AI systems produce fewer omission errors but more mistranslation errors that alter meaning; humans produce more minor fluency errors but fewer meaning-changing errors

- Error Detectability: AI errors are often harder to detect because they appear fluent and plausible; human errors are more obvious because they break linguistic expectations

- Error Impact: Research suggests AI errors, while fewer in number, may have higher average severity due to undetected meaning changes
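
The point that fewer errors can still mean higher overall severity can be illustrated with a severity-weighted penalty in the style of MQM scoring. The weights below (minor 1, major 5, critical 10) are assumed for illustration; actual MQM deployments define their own weights, and the error lists are invented examples rather than study data.

```python
# Assumed severity weights; real MQM scorecards configure their own values.
SEVERITY_WEIGHTS = {"minor": 1, "major": 5, "critical": 10}


def weighted_penalty(errors, word_count, per_words=1000):
    """Severity-weighted error penalty, normalized per `per_words` source words."""
    total = sum(SEVERITY_WEIGHTS[severity] for severity in errors)
    return total * per_words / word_count


# Hypothetical annotations for the same 1,000-word text.
ai_penalty = weighted_penalty(["critical", "major"], word_count=1000)
human_penalty = weighted_penalty(["minor"] * 6, word_count=1000)
print(ai_penalty, human_penalty)
```

Under these assumed weights, two meaning-changing errors produce a penalty of 15, while six minor fluency errors produce a penalty of 6, so the translation with fewer errors scores worse.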

Error Detection Rates

A critical research question is how often AI errors are caught during quality review. Studies on post-editing effectiveness (e.g., Vieira, 2016; Koponen et al., 2020) suggest:

- Professional post-editors catch 85-95% of AI errors in general domain content

- Detection rates drop to 70-80% for specialized domains where post-editors may not recognize subtle errors

- Hallucination detection is particularly challenging—fluent but hallucinated content may escape notice without source comparison

- Human review effectiveness depends on reviewer training, time allocation, and domain expertise

Human Parity: Myth or Reality?

The "human parity" claim has generated substantial research interest and debate. This section examines the evidence, definitions, and critiques surrounding parity claims.

Defining "Human Parity"

"Human parity" lacks a universally accepted definition, contributing to debate over claims. Research literature reveals several interpretations:

- Statistical Indistinguishability: AI and human translations receive equivalent average scores in blind evaluation (Hassan et al., 2018 definition)

- Interchangeability: AI output is functionally equivalent to human translation for intended use cases

- Optimal Performance: AI achieves ceiling performance—further improvements would not meaningfully enhance quality

- Single-Aspect Parity: Parity on specific metrics (adequacy, fluency) without claiming overall equivalence

These definitional differences matter because a system achieving "parity" by one definition may fall short by another. The statistical indistinguishability of Hassan et al. (2018), for example, does not imply that AI translations are as good as the best human translations, only that they're not reliably distinguishable from average professional quality in blind tests.

Human Parity Experiments

Rigorous human parity experiments follow specific protocols to minimize bias and maximize validity:

Blind Evaluation Protocol: Professional translators evaluate translations without knowing whether each was produced by AI or by a human, rating quality on standardized scales. Statistical analysis determines whether quality differences are detectable.

Statistical Significance: Parity claims require statistical testing showing no significant quality difference. However, "not significantly different" is not equivalent to "equal"—studies may lack power to detect small but meaningful differences.
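
The distinction between "not significantly different" and "equal" can be seen in a small paired test. The sketch below implements a sign-flip permutation test on per-segment score differences. It is a generic statistical illustration, not the procedure used in any of the cited parity studies, and the scores in the example are invented.

```python
import random


def paired_permutation_p(scores_a, scores_b, trials=10000, seed=0):
    """Two-sided p-value for the mean paired difference via random sign flips.

    Under the null hypothesis that the two systems are exchangeable, the
    sign of each per-segment difference is arbitrary, so we flip signs at
    random and count how often the mean is at least as extreme as observed.
    """
    rng = random.Random(seed)
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(sum(diffs) / len(diffs))
    extreme = 0
    for _ in range(trials):
        flipped = sum(d if rng.random() < 0.5 else -d for d in diffs)
        if abs(flipped / len(diffs)) >= observed:
            extreme += 1
    return extreme / trials


# Hypothetical ratings: the human translation is rated higher on every segment.
human = [82, 79, 91, 85, 88]
machine = [80, 78, 88, 84, 85]
print(paired_permutation_p(human, machine))
```

With only five segments, even a consistent advantage cannot reach conventional significance levels, because the smallest attainable two-sided p-value is 2/32. A non-significant result on a small test set therefore reflects limited power, not demonstrated equality.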

Critiques of Parity Claims

Academic critiques of human parity claims (Koehn, 2020; Freitag et al., 2021) identify several concerns:

- Evaluator Bias Issues: Professional translators may not represent end-user quality needs; their evaluation criteria may emphasize aspects end-users don't value

- Limited Test Sets: Parity claims often rest on narrow test sets (news commentary) that favor data-rich general domains

- Professional vs. Amateur Translators: Studies sometimes compare AI to average translation rather than expert, domain-specialized human translation

- Quality Definition Narrowness: Parity claims focus on adequacy and fluency while neglecting error types (hallucinations, cultural adaptation) that matter for real-world use

- Temporal Validity: Parity achieved on 2018 test sets may not hold for 2024 content with different terminology and concepts

Freitag et al. (2021) re-evaluated Microsoft's human parity claim using stricter methodology and found that while quality was high, parity was not definitively established when accounting for confidence intervals and multiple quality dimensions.

Current Consensus (2024)

Based on accumulated research evidence, the 2024 consensus on human parity can be summarized as follows:

- Where Parity Exists: High-resource language pairs (English-French, English-German, English-Spanish) in general-domain news and technical content show statistical parity or near-parity in blind evaluations

- Where Gaps Remain: Low-resource languages, creative/literary content, legal/medical high-stakes domains, and culturally-adaptive translation show clear human superiority

- Conditional Parity: Even where parity is claimed, it is conditional on appropriate use cases, post-editing availability, and quality tolerance levels

- Ongoing Progress: LLMs (GPT-4, Claude) have pushed parity boundaries, but fundamental gaps on challenging linguistic phenomena persist

Post-Editing Research: The Hybrid Reality

The practical reality of AI translation deployment is not AI vs. human but AI + human through post-editing workflows. Research on Machine Translation Post-Editing (MTPE) demonstrates the effectiveness and limitations of this hybrid approach.

Machine Translation Post-Editing (MTPE) Studies

Extensive research literature examines MTPE productivity and quality outcomes:

Productivity Gains: Meta-analyses (e.g., Cadwell et al., 2018) of MTPE studies show consistent productivity improvements:

- Average productivity gain: 30-40% across all domains and language pairs

- High-resource pairs, technical domains: 60-100% gains

- Low-resource pairs, creative domains: 10-20% gains (sometimes negative)

- Time savings: 25-50% reduction in translation time for equivalent quality output
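
Productivity gains and time savings in the list above are related but not interchangeable. Assuming that "productivity gain" means an increase in throughput (words per hour), which the cited studies may define differently, the conversion is a one-line formula, sketched here with hypothetical function names.

```python
def time_saving_from_gain(gain):
    """Convert a throughput gain (0.5 means 50% more words per hour) to time saved.

    If throughput rises by `gain`, the same text takes 1 / (1 + gain) of the
    original time, so the fraction saved is gain / (1 + gain).
    """
    return gain / (1 + gain)


def gain_from_time_saving(saving):
    """Convert a fractional time saving back to the equivalent throughput gain."""
    return saving / (1 - saving)


for gain in (0.30, 0.40, 1.00):
    print(f"gain {gain:.0%} -> time saved {time_saving_from_gain(gain):.0%}")
```

Under this reading, a 30-40% productivity gain corresponds to roughly 23-29% less time, and a 100% gain to 50% less time, which is why the two kinds of figures should not be compared directly.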

Quality Outcomes: Research by Green et al. (2013), Koponen et al. (2020), and others demonstrates that MTPE output often matches or exceeds human-only translation quality:

- MTPE combines the speed of MT with human accuracy on complex judgments

- Post-editors catch AI errors while preserving AI strengths (fluency, consistency)

- Quality ceiling higher than either MT-only or human-only in time-limited scenarios

PE Effort Prediction: Research has developed models that predict post-editing effort from features of the source text and MT output, such as sentence complexity, MT confidence scores, and terminology density (Specia, 2011). These models enable workflow optimization by routing high-effort content to human translation and low-effort content to light post-editing.

Light vs Full Post-Editing

Research distinguishes two MTPE approaches with different quality-efficiency tradeoffs:

Light Post-Editing (LPE):

- Minimal intervention: only critical errors (meaning-changing mistranslations) are corrected

- Goal: Acceptable quality for time-sensitive, low-visibility content

- Typical time: 20-40% of human translation time

- Quality outcome: Below human-only, but adequate for gisting/internal use

Full Post-Editing (FPE):

- Comprehensive revision: all errors are corrected, including stylistic and preferential ones

- Goal: Human-equivalent quality for published content

- Typical time: 50-75% of human translation time

- Quality outcome: Equivalent to human translation with efficiency gains

Studies (e.g., O'Brien et al., 2014; Vieira, 2016) demonstrate that LPE achieves 50-60% time savings but produces quality 10-20% below human-only, while FPE achieves 25-40% savings with equivalent quality.

Post-Editor Skills and Training

Research reveals that post-editing requires distinct skills from translation, driving specialized training needs:

- Error Pattern Recognition: Post-editors must quickly identify AI-typical errors (hallucinations, coreference failures) that differ from human translation patterns

- Efficiency Optimization: Judgment about which errors to fix (critical vs. preferential) under time constraints

- MT System Familiarity: Understanding MT limitations by system and language pair to anticipate errors

- Cognitive Load Management: Dealing with "stilted" MT output that requires more cognitive effort to revise than natural text

Training effectiveness research (e.g., Koponen, 2012; do Carmo, 2018) shows that post-editors with specialized training achieve 15-25% higher productivity than translators adapting to post-editing without training.

Quality of MTPE vs Human-Only Translation

The critical question for enterprise deployment is whether MTPE achieves quality equivalent to human translation. Research findings are generally positive:

- Studies by Green (2014), Plitt & Masselot (2010), and others show MTPE output matches human-only translation quality in blind evaluation

- Some studies (e.g., Guerberof, 2012) show MTPE slightly exceeds human-only quality, possibly because it combines AI fluency with human accuracy

- Quality equivalence depends on: MT system quality, post-editor skill, domain appropriateness, and time allocation

- Low-resource pairs and creative domains show less favorable MTPE outcomes

Quality Metrics Correlation Analysis

A fundamental research question is whether automatic metrics reliably predict human translation quality judgments. Metric validation studies examine this correlation.

Do Automatic Metrics Predict Human Judgment?

Research on metric reliability (e.g., Bojar et al., annual WMT metrics tasks; Mathur et al., 2020) provides correlation coefficients between automatic metrics and human Direct Assessment scores:

- BLEU: 0.25-0.45 (varies by language pair)

- chrF: 0.35-0.55

- TER: 0.30-0.50

- BERTScore: 0.50-0.70

- COMET: 0.65-0.80

- GEMBA (GPT-4): 0.75-0.85

These correlations indicate that: (1) Neural metrics (COMET, BERTScore, GEMBA) substantially outperform n-gram metrics; (2) Even the best metrics have imperfect correlation (r=0.85 leaves 27.75% of variance unexplained); (3) Metric reliability varies by domain and language pair.
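
The unexplained-variance figure in point (2) follows from squaring the correlation coefficient. A minimal sketch, assuming the coefficients are Pearson correlations and using the upper bounds from the list above:

```python
def unexplained_variance(r):
    """Share of variance not explained by a linear fit with correlation r (1 - r^2)."""
    return 1 - r ** 2


# Upper-bound correlations quoted in the list above.
for metric, r in {"BLEU": 0.45, "COMET": 0.80, "GEMBA": 0.85}.items():
    print(f"{metric}: r={r:.2f}, unexplained variance {unexplained_variance(r):.2%}")
```

At r = 0.85 the result is 27.75%, matching the figure in the text; at BLEU's upper bound of r = 0.45, roughly 80% of the variance in human scores is left unexplained.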

BLEU vs Human Quality: Known Limitations

Extensive research documents BLEU's limitations as a human quality predictor (Thompson & Koehn, 2020; Callison-Burch et al., 2006):

- BLEU correlation with human judgments ranges from r=0.3 to r=0.7 depending on test conditions—too low for reliable quality assessment

- BLEU is particularly unreliable for: low-resource pairs (where reference quality may be poor), creative content (where valid variation is high), and comparing systems of similar quality (where BLEU's granularity is insufficient)

- BLEU scores are not comparable across language pairs—a BLEU of 35 for English-French represents different quality than BLEU 35 for English-Chinese

COMET and Neural Metrics: Improved Reliability

Neural metrics address many BLEU limitations, as documented in validation studies:

- COMET's Kendall tau correlation of 0.42-0.58 with segment-level DA substantially exceeds BLEU's 0.25-0.35

- COMET better handles synonymy and paraphrase through embedding-based similarity

- System-level correlation (ranking multiple systems) is particularly strong, supporting COMET's use in competitive evaluation

- Limitations remain: COMET is reference-dependent, computationally expensive, and shows reduced reliability for low-resource pairs with limited training data
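
For readers unfamiliar with the Kendall tau figures above, the sketch below computes plain Kendall tau-a between a metric's segment scores and human scores. Shared-task evaluations have used their own tau variants, so this is an illustration of the general idea, not the exact WMT formulation, and the scores are invented.

```python
from itertools import combinations


def kendall_tau(metric_scores, human_scores):
    """Kendall tau-a: (concordant - discordant) / total pairs.

    A pair of segments is concordant when the metric and the human scores
    order the two segments the same way; tied pairs count as neither.
    """
    pairs = list(combinations(range(len(metric_scores)), 2))
    concordant = discordant = 0
    for i, j in pairs:
        product = (metric_scores[i] - metric_scores[j]) * (human_scores[i] - human_scores[j])
        if product > 0:
            concordant += 1
        elif product < 0:
            discordant += 1
    return (concordant - discordant) / len(pairs)


# Hypothetical segment-level scores.
metric = [0.1, 0.4, 0.3, 0.9]
human = [10, 30, 40, 90]
print(round(kendall_tau(metric, human), 3))
```

In this toy example five of the six segment pairs are ordered the same way by the metric and by the human scores, and one is reversed, giving (5 - 1) / 6, or about 0.667.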

Multi-Metric Approaches

Research suggests that ensemble approaches combining multiple metrics improve reliability:

- Using BLEU + COMET + BERTScore together captures different quality dimensions

- WMT evaluations now report multiple metrics alongside human judgments

- Some research (e.g., Specia et al., 2018) combines automatic metrics with quality estimation for improved prediction
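
One simple way to combine metrics that live on different scales is to standardize each metric across systems and average the results. The sketch below does this with equal weights, which is an assumption made for illustration and not the method of the studies cited above; the scores are invented.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize values to zero mean and unit variance (all zeros if constant)."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]


def combined_score(metric_table):
    """Average per-system z-scores across metrics with equal weights.

    metric_table: dict of metric name -> list of scores, one per system,
    with every metric oriented so that higher is better.
    """
    standardized = [zscores(scores) for scores in metric_table.values()]
    return [mean(column) for column in zip(*standardized)]


# Hypothetical scores for three systems.
table = {
    "bleu": [30.0, 35.0, 40.0],
    "comet": [0.80, 0.88, 0.86],
    "bertscore": [0.90, 0.93, 0.92],
}
print(combined_score(table))
```

Metrics where lower is better, such as TER, would need to be negated before being added to the table.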

Economic and Practical Implications

Research on AI translation quality has direct economic implications for translation buyers, language service providers, and translator professionals. Quality-cost-time tradeoffs drive practical deployment decisions.

Quality vs Cost Trade-offs

Research on translation economics examines acceptable quality thresholds for different use cases:

- Gisting/Information Extraction: 70-80% accuracy sufficient; raw MT acceptable without post-editing

- Internal Communication: 85-90% accuracy; light post-editing or qualified MT sufficient

- Published Content: 95-98% accuracy; full post-editing or human translation required

- Legal/Medical/Regulatory: 99%+ accuracy; human translation with specialized review essential

Studies demonstrate that AI translation reduces costs by 40-70% for content where AI quality is adequate, while maintaining or improving quality through MTPE workflows. However, deploying AI for inappropriate content types can increase costs through extensive post-editing requirements or quality failures requiring rework.

AI-Human Workflow Optimization

Research-informed workflow optimization uses quality prediction to route content appropriately:

- Quality Estimation Routing: Automated QE predicts translation difficulty, routing high-confidence content to light PE and low-confidence to full PE or human translation

- Domain-Based Routing: Technical content → MTPE; Legal/Medical → Human-first with MT as reference

- Quality Gates: Automated checks flag potential errors for human review, concentrating attention on high-risk segments
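
The routing rules above can be sketched as a small decision function. The domain set follows the list; the quality-estimation thresholds and workflow labels are hypothetical placeholders that a real deployment would calibrate on its own data.

```python
# Domains routed to human-first workflows, per the list above.
HUMAN_FIRST_DOMAINS = {"legal", "medical", "regulatory"}


def route(domain, qe_score, light_pe_threshold=0.85, full_pe_threshold=0.60):
    """Pick a workflow from the domain and a QE confidence score in [0, 1].

    The threshold defaults are illustrative assumptions, not published values.
    """
    if domain in HUMAN_FIRST_DOMAINS:
        return "human_translation_with_mt_reference"
    if qe_score >= light_pe_threshold:
        return "light_post_editing"
    if qe_score >= full_pe_threshold:
        return "full_post_editing"
    return "human_translation"


print(route("technical", 0.91))
print(route("technical", 0.70))
print(route("legal", 0.95))
```

Checking the domain before the QE score reflects the priority implied above: high-stakes content goes to a human-first workflow regardless of how confident the system appears.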

Time-to-Quality Research

Research examines the speed vs. accuracy trade-off in translation workflows:

- Raw MT: Immediate output, 70-95% quality (language-dependent)

- Light Post-Editing: roughly 20-40% of human translation time, 10-20% quality improvement over raw MT

- Full Post-Editing: roughly 50-75% of human translation time, achieves human quality

- Human Translation: 100% baseline time, variable quality based on translator skill

Current State of the Art (2024-2025)

Recent research on state-of-the-art systems (GPT-4, Claude, Gemini, specialized NMT) documents the current frontier of AI translation capability.

GPT-4/GPT-4o Translation Quality

Recent studies (2023-2024) on GPT-4 translation capabilities reveal:

- GPT-4 achieves COMET scores competitive with Google Translate and DeepL on high-resource pairs (English-French, English-German)

- Few-shot prompting (providing translation examples in context) improves GPT-4 quality by 5-15% on specialized domains

- In-context learning enables GPT-4 to handle low-resource languages better than dedicated NMT systems for some pairs

- Error patterns differ: GPT-4 produces more fluent output but occasionally hallucinates or over-translates

- Cost considerations: GPT-4 translation is 10-50x more expensive per word than dedicated MT APIs, which limits its use at scale
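
Few-shot prompting, as described above, amounts to placing a handful of source/target example pairs in the prompt ahead of the text to be translated. The sketch below only assembles the prompt string and makes no API call; the function name, the prompt wording, and the default language labels are assumptions for illustration rather than a format prescribed by any of the cited studies.

```python
def build_few_shot_prompt(
    source_text: str,
    examples: list[tuple[str, str]],
    source_lang: str = "English",
    target_lang: str = "German",
) -> str:
    """Assemble a few-shot translation prompt from (source, target) example pairs."""
    lines = [f"Translate the following text from {source_lang} to {target_lang}.", ""]
    # Each in-context example shows the model a domain-specific translation.
    for src, tgt in examples:
        lines.append(f"{source_lang}: {src}")
        lines.append(f"{target_lang}: {tgt}")
        lines.append("")
    # The final pair is left open so the model completes the target side.
    lines.append(f"{source_lang}: {source_text}")
    lines.append(f"{target_lang}:")
    return "\n".join(lines)
```

In a specialized domain, the examples would typically be drawn from previously approved translations so that the prompt carries the client's terminology and style.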

Specialized NMT Systems

Domain-adapted and specialized NMT systems show continued advancement:

- Domain Adaptation: Fine-tuning on domain-specific corpora (legal, medical, technical) improves quality by 10-20% on in-domain test sets

- Terminology Integration: Constrained decoding and terminology databases improve terminology accuracy by 25-40%

- Custom Training: Enterprises with large parallel corpora can train custom models exceeding generic system quality
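
Terminology accuracy, the measure referred to above, can be approximated by checking whether each glossary term found in a source segment has its required target term in the translation. The following is a rough sketch under simplifying assumptions: the function name and glossary format are hypothetical, and matching is done by case-insensitive substring search, which ignores inflection and word boundaries.

```python
def terminology_accuracy(
    pairs: list[tuple[str, str]],
    glossary: dict[str, str],
) -> float:
    """Share of glossary terms in the sources that are rendered with the required target term.

    pairs: (source, translation) segments.
    glossary: source term -> required target term.
    """
    expected = 0
    matched = 0
    for source, translation in pairs:
        src_lower = source.lower()
        tgt_lower = translation.lower()
        for src_term, tgt_term in glossary.items():
            # Count a term only when it actually occurs in the source segment.
            if src_term.lower() in src_lower:
                expected += 1
                if tgt_term.lower() in tgt_lower:
                    matched += 1
    # With no glossary terms in the sources there is nothing to violate.
    return matched / expected if expected else 1.0
```

A production check would need lemmatization or morphological matching for inflected target languages; substring search is used here only to keep the idea visible.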

Multimodal Translation

Emerging research examines multimodal translation combining speech, text, and visual context:

- Speech-to-Speech Translation: Systems such as Google's Translatotron and Meta's SeamlessM4T enable real-time spoken translation, with 15-25% quality degradation compared to text-to-text translation

- Visual Context: Multimodal models incorporating images show improved translation of visually-grounded content (product descriptions, scene descriptions)

Real-time Translation Quality

Research on simultaneous interpretation AI (e.g., from Kites, Wordly, and research labs) documents the latency-accuracy trade-off:

- Higher latency (waiting for sentence completion) improves quality; real-time word-by-word translation shows 20-30% quality degradation

- Quality sufficient for information extraction but not yet competitive with human simultaneous interpretation for high-stakes contexts

Future Research Directions

Several active research areas will shape the next generation of AI translation quality assessment and improvement.

Quality Estimation (QE)

Quality estimation research aims to predict translation quality without reference translations, enabling real-time workflow decisions:

- Word-Level QE: Predicting which words/phrases are likely mistranslated

- Sentence-Level QE: Overall quality scores for routing decisions

- Confidence Scoring: Calibration of model confidence to actual error probability

- Research Challenges: QE correlation with human judgments remains lower than reference-based metrics; improving QE is an active research priority
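
Confidence calibration, mentioned above, is commonly summarized with the expected calibration error (ECE): predictions are grouped into confidence bins, and the gap between average confidence and observed accuracy is averaged across bins, weighted by bin size. The sketch below is a plain-Python illustration using equal-width bins; the function name and the choice of ten bins are assumptions, not details taken from the research discussed here.

```python
def expected_calibration_error(
    confidences: list[float],
    correct: list[bool],
    n_bins: int = 10,
) -> float:
    """ECE with equal-width bins over [0, 1]; 0.0 means perfectly calibrated."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        raise ValueError("at least one prediction is required")

    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 falls into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        # Weight each bin's confidence/accuracy gap by its share of predictions.
        ece += (len(members) / n) * abs(avg_conf - accuracy)
    return ece
```

Here `confidences` would be a QE system's per-segment or per-word confidence and `correct` would come from human error annotation; a system that reports 90% confidence but is right only 75% of the time would show a gap of about 0.15.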

Human-in-the-Loop AI

Interactive translation systems that incorporate real-time human feedback represent an emerging research direction:

- Interactive Translation Prediction (ITP): Systems that predict and suggest completions as translators type, incorporating human choices into model updates

- Real-time Adaptation: Systems that adapt to individual translator preferences and corrections during translation sessions

Continual Learning

Research on systems that learn from corrections and improve over time:

- Error Correction Learning: Updating models based on post-editor corrections to reduce repeated errors

- Personalization: Adapting to individual user preferences and terminology choices

- Research Challenges: Preventing catastrophic forgetting while incorporating new knowledge; balancing personalization with generalization

Evaluating Evaluators

Meta-research on evaluation methodology itself is an important research direction:

- Inter-Annotator Agreement: Research shows that human evaluators disagree significantly on translation quality (Krippendorff's alpha of 0.3-0.6), which calls into question the assumption that human judgment is a gold standard

- Expert vs. Crowd Evaluation: Comparing professional translator evaluations with crowd worker and end-user quality perceptions

- Evaluation Bias: Studying systematic biases in human evaluation (anchoring, recency effects, evaluator fatigue)
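
Krippendorff's alpha, the agreement statistic cited above, compares observed disagreement among raters with the disagreement expected by chance: a value of 1 indicates perfect agreement and 0 indicates chance-level agreement. The sketch below implements the nominal (category-label) variant from a coincidence matrix. It is a simplification offered for illustration: translation quality scores are usually ordinal or interval, which calls for a different distance function, and the function name and input layout are assumptions.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units: list[list[str]]) -> float:
    """Krippendorff's alpha for nominal labels.

    units: one list per rated item, holding the labels assigned by the
    raters who judged it. Items with fewer than two labels are skipped.
    """
    # Coincidence matrix: each ordered pair of labels from different raters
    # within an item contributes 1 / (m - 1), where m is the item's label count.
    coincidences: Counter = Counter()
    for ratings in units:
        m = len(ratings)
        if m < 2:
            continue
        for a, b in permutations(ratings, 2):
            coincidences[(a, b)] += 1.0 / (m - 1)

    totals: Counter = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    if n <= 1:
        raise ValueError("need at least one item with two or more ratings")

    # Observed and expected disagreement share a common factor of 1 / n,
    # which cancels in the ratio and is therefore omitted from both.
    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        raise ValueError("alpha is undefined when only one label is used")
    return 1.0 - observed / expected
```

For example, four segments each judged by two evaluators, where the evaluators agree on two segments and disagree on the other two, give an alpha of 0.125 with this function, well below the levels usually treated as reliable.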

Practical Recommendations Based on Research

This section synthesizes the research evidence into actionable guidance for translation decision-making.

When to Trust AI Translation (Research-Backed Guidelines)

Research supports AI translation deployment when:

- Language pair is high-resource (English-French, English-German, English-Spanish, English-Portuguese)

- Domain is general news, technical documentation, or information content without specialized terminology requirements

- Content does not require certified translation or carry legal liability

- Quality requirement is 85-95% accuracy (not 99%+)

- Post-editing resources are available for quality assurance

- Error tolerance allows occasional hallucinations or mistranslations

When Human Translation is Essential

Research evidence supports human translation when:

- Content requires certified translation (immigration, legal, regulatory)

- Legal liability attaches to translation accuracy (contracts, patents, compliance)

- Life-safety implications exist (medical records, pharmaceutical documentation, patient instructions)

- Creative and literary quality is paramount (marketing, literature, brand voice)

- Cultural adaptation is required beyond literal translation

- Language pair is low-resource with documented 30-50% quality gaps

- Source text contains significant ambiguity requiring contextual judgment

Hybrid Approach Best Practices

Research-informed MTPE workflow optimization:

- Use quality estimation to route content: high-confidence to light PE, low-confidence to full PE or human translation

- Train post-editors on AI error patterns, since post-editing requires different skills than traditional translation

- Implement quality gates: automated checks for numbers, terminology, and high-risk segments requiring human attention

- Monitor post-editing effort—if PE time approaches human translation time, switch to human-first workflow

- Collect and analyze post-editing data to continuously improve AI system selection and configuration
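
Two of the practices above lend themselves to simple automation: a quality gate that checks whether numbers survive translation, and a monitor that flags when post-editing time approaches human translation time. The sketch below illustrates both under stated assumptions: the function names are hypothetical, the 0.9 ratio is a placeholder for "approaches", and the number check strips thousands and decimal separators so that locale differences such as `1,500.50` versus `1.500,50` do not trigger false alarms.

```python
import re
from collections import Counter

# Digits, optionally grouped with "." or "," separators (e.g. 1,500.50 or 1.500,50).
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*")


def _digits_only(token: str) -> str:
    """Drop separators so locale-specific number formats compare equal."""
    return re.sub(r"[.,]", "", token)


def numbers_match(source: str, translation: str) -> bool:
    """Quality gate: True if both texts contain the same multiset of numbers."""
    src = Counter(_digits_only(t) for t in NUMBER_PATTERN.findall(source))
    tgt = Counter(_digits_only(t) for t in NUMBER_PATTERN.findall(translation))
    return src == tgt


def should_switch_to_human_first(
    pe_minutes: float,
    human_baseline_minutes: float,
    ratio_threshold: float = 0.9,  # placeholder value, to be tuned per project
) -> bool:
    """Flag content whose post-editing time approaches human translation time."""
    if human_baseline_minutes <= 0:
        raise ValueError("human_baseline_minutes must be positive")
    return pe_minutes / human_baseline_minutes >= ratio_threshold
```

Stripping separators is a trade-off: it tolerates locale formatting but cannot distinguish `1.5` from `15`, so a segment that passes this gate still warrants human attention in high-risk domains. Numbers written out as words are not detected at all.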

Quality Assurance by Domain

Domain-specific QA protocols based on error pattern research:

- Technical: Verify terminology consistency, check for hallucinated product names or specifications

- Legal: Full human review mandatory; check for misinterpretation of performative language, numbers, and dates

- Medical: Expert review by medical professionals; verify drug names, dosages, contraindications

- Marketing: Cultural review by target-market natives; check humor, idioms, and cultural references

Limitations of Current Research

A critical examination of the research limitations tempers the conclusions above and suggests areas for methodological improvement.

Publication Bias

Research on AI translation quality faces inherent publication bias:

- Technology companies have incentives to publish positive results while negative findings may go unreported

- "Human parity" claims generate media attention, encouraging headline-seeking framing

- Failed experiments and quality failures are rarely documented in research literature

- Independent academic research provides valuable counterbalance but may lack access to production-scale systems

Evaluation Scope Limitations

Research evaluation scope faces several constraints:

- Test Set Bias: Most research uses news and web content test sets that favor data-rich general domains

- Language Coverage Gaps: The majority of research focuses on major European and East Asian languages; research on African, South Asian, and Indigenous languages is limited

- Short-Text Focus: Sentence-level evaluation dominates, while document-level coherence receives less attention

- Static Evaluation: Research evaluates on static test sets, while real-world content evolves continuously

The Evolving Landscape

Research faces inherent lag behind rapidly evolving technology:

- GPT-4 (released 2023) and subsequent LLMs have changed the landscape faster than academic research can evaluate them

- Findings from 2020 may not apply to 2024 systems with different architectures and capabilities

- Academic peer review timelines (months to years) are misaligned with industry release cycles (weeks to months)

Conclusion and Key Takeaways

This comprehensive research synthesis reveals a nuanced picture of AI vs human translation accuracy that defies simple conclusions.

Summary of Research Consensus

Based on analysis of 50+ major research studies, the evidence supports the following conclusions:

- Conditional Parity Achieved: For high-resource language pairs (English-French, English-German, English-Spanish) in general domains (news, technical), AI translation achieves quality within 2-5% of professional human translation, with some studies supporting "human parity" claims under specific conditions

- Persistent Gaps: Low-resource languages (30-50% quality gaps), high-stakes domains (legal, medical), and creative content show clear human superiority that AI has not closed

- Error Pattern Differences: AI and humans make different errors: AI produces more meaning-altering mistranslations and hallucinations, while humans produce more fluency variations and fatigue-related errors

- MTPE Effectiveness: Machine translation post-editing achieves 30-100% productivity gains with quality matching or exceeding human-only translation, representing the practical path forward for most enterprise use cases

- Metric Evolution: Neural metrics (COMET, BERTScore) substantially outperform n-gram metrics (BLEU) in predicting human quality judgments, enabling more reliable automatic quality assessment

AI Capabilities and Limitations

AI translation systems demonstrate remarkable capabilities within their operational boundaries while exhibiting fundamental limitations at those boundaries:

- Strengths: Speed, consistency, handling of repetitive technical content, preservation of complex syntactic structures, 24/7 availability, cost efficiency at scale

- Limitations: Hallucinations, context window constraints, cultural adaptation failures, weak ambiguity resolution, limited creative recreation, inability to meet accountability and certification requirements

Human Translator Enduring Value

Research confirms that human translators provide essential value that AI cannot replicate:

- Accountability and certification for legal/regulatory requirements

- Cultural competence and creative adaptation for marketing and literary content

- Ambiguity resolution and contextual judgment for complex source texts

- Quality assurance through post-editing of AI output

- Ethical responsibility for high-stakes content (medical, legal, safety-critical)

Evidence-Based Decision Framework

The research evidence supports a decision framework based on content characteristics rather than blanket AI vs. human choices:

- Raw MT: Gisting, information extraction, internal communication where speed outweighs perfection

- Light Post-Editing: Time-sensitive published content where 90-95% quality is acceptable

- Full Post-Editing: Most enterprise content requiring human-equivalent quality with efficiency gains

- Human Translation: Certified, legal, medical, creative content where AI limitations create unacceptable risk

Call for Continued Research

The rapid evolution of AI translation technology demands continued research investment:

- Independent evaluation of commercial systems using rigorous methodology

- Low-resource language quality assessment and improvement

- Long-document and discourse-level quality evaluation

- Hallucination detection and mitigation research

- Quality estimation advancement for real-time workflow optimization

- Human-AI collaborative translation effectiveness

Final Assessment: The research evidence supports neither triumphalist claims that AI has replaced human translators nor defeatist assertions that AI offers no value. The empirical reality is more nuanced: AI translation has achieved remarkable quality for specific use cases while retaining fundamental limitations that ensure continued human relevance. The future belongs not to AI or humans alone, but to intelligent integration that combines AI speed and consistency with human judgment and cultural competence, as evidenced by the 30-100% productivity gains documented in post-editing research. Translation buyers who understand these evidence-based boundaries will optimize both quality and efficiency, while those who ignore research findings risk quality failures or unnecessary costs.

References and Research Sources

This analysis synthesizes findings from the following major research sources:

Bojar, O., et al. (Annual). Proceedings of the Conference on Machine Translation (WMT). Association for Computational Linguistics.

Cadwell, P., et al. (2018). Human factors in machine translation and post-editing: Industry practices and research findings. Translation Spaces, 7(1), 2-23.